Hachyderm Community Documentation

Welcome to Hachyderm!

Here we are trying to build a curated network of respectful professionals in the tech industry around the globe. We welcome anyone who follows the rules and needs a safe home or fresh start.

We are hackers, professionals, enthusiasts, and are passionate about life, respect, and digital freedom. We believe in peace and balance.

Safe space. Tech Industry. Economics. OSINT. News. Rust. Linux. Aurae. Kubernetes. Go. C. Infrastructure. Security. Black Lives Matter. LGBTQIA+. Pets. Hobbies.

Quick FAQs to Get Started

How do you pronounce “Hachyderm”?

Pronounced hack-a-derm like pachyderm. (Audio link.)

(What is a pachyderm?)

Running a service is expensive. How can I donate?

There are a few ways to support us, including through Hachyderm’s GitHub Sponsors page.

For additional ways to support, including supporting via Nivenly, please take a look at our Funding and Thank You doc.

Is Hachyderm down or just me? Or: I think I’m having a service issue.

To see if Hachyderm itself is up and running, please look at:

To report a full or partial outage, or an issue with a particular feature not working as expected, please use

our Community Issues on GitHub.

Where are all those great incident reports I keep hearing about?

On our blog.

Also: The famous “The Queues ☃️ down in Queueville” Incident Report

I’m new to Mastodon in general. Help?

If you’re new to Mastodon in general, we recommend taking a look at Mastodon.Help’s brief explainer

about what Mastodon is and isn’t, as well as taking a look at fedi.tips for some How To Dos.

As for How to be a Hachydermian, take a look at the docs in our Hachyderm section.

Can I create a non-user account?

Accounts that are not for an individual person’s personal use are referred to as “Specialized Accounts”

and are categorized as corporate, bots, curated, OSS Project, Community Event, and Influencer Accounts.

Some accounts are restricted and/or invite-only and many have rules governing how they can interact with

the platform. Unrecognized account types are suspended. Please read our Account Types documentation

for more information.

Please email us at admin@hachyderm.io.

I have an issue that requires the moderation team, please help.

If the issue is regarding a user or post, please use the report feature in Mastodon. If you need to

reach the mods another way, please look at our Reporting and Communication page.

I would like to know why a user or instance was moderated. How do I reach out and what can I expect?

Due to our size, we do not publicly share moderation information. That said:

- If you are a moderator of a different instance and are contacting us about either your

instance or a user on your instance, please email us at admin@hachyderm.io.

- If you are the moderated user and you are not on Hachyderm, please email us

at admin@hachyderm.io.

- If you are the moderated user and you are on Hachyderm, please see our doc for

how to file an Appeal.

If you are not the moderated user and not on a moderation team, please still feel free

to email us at admin@hachyderm.io to confirm if a user or instance

is moderated or not. The main focus of moderation is community safety, so if a specific

user or instance has been temporarily or permanently moderated, then the most common

reason is that we saw an incident or behavioral pattern that we believed posed a risk

to community safety.

I have been moderated and would like to know what to do.

Please take a look at our Moderation Actions and Appeals page.

I would like to know more about how Hachyderm is moderated, so that I can determine if Hachyderm is a safe space for me.

Please start by looking at both our Rule Explainer and our Moderator Covenant.

The main rule of Hachyderm is “Don’t Be A Dick” and the remaining rules explicitly call out common

“whataboutisms”. The Rule Explainer dives into these different facets to provide additional clarity.

The Moderator Covenant is to show how the rules are enforced and what is used to guide our decisions.

The other rules we have are governing the account types permitted on Hachyderm and how

often they can post and how they can interact on the platform.

Who designed the Hachyderm logo?

Our logo was designed by the lovely Ashton Rainbows. (website, Instagram)

Thank you Ashton!

1 - Funding Hachyderm

How to fund Hachyderm and our sponsor list.

🎉 Thank you to all our donors and sponsors! 🎉

Hachyderm is primarily funded by individual Hachydermians either directly to the Hachyderm project

or to Hachyderm’s parent org, the Nivenly Foundation. Although corporate accounts assist with paying

for Hachyderm’s infrastructure costs, they are not the primary source of funding for Hachyderm.

How to donate

One fast, easy way to donate to help finance Hachyderm is directly via Hachyderm’s GitHub

Sponsors page:

Donate

Hachyderm’s GitHub sponsors page has a few benefits, including:

- Sponsor icon on your GitHub profile

- (Optional) A shout out on our #ThankYouThursday on Hachyderm’s Hachyderm account starting in April.

- (Optional) Added to our Thank You list at the bottom of this page starting in April.

For both the shoutouts and Thank You list: we will use your GitHub username by default.

If you would like this changed please either submit a PR or email us at admin@hachyderm.io.

Donation Options

The three ways to support Hachyderm are:

- Donating directly to the Hachyderm project

- Donating to Hachyderm’s parent organization, the Nivenly Foundation

- Purchasing swag

Regular Donations

(… and swag)

Please visit our swag store if you’re looking to update your awesome assortment

of shirts, mugs, stickers, and so on:

The Nivenly Foundation and Membership

The Nivenly Foundation is the parent organization for Hachyderm. The non-profit

co-op itself is being founded over the course of 2023. Currently, Nivenly is a recognized non-profit in

the State of Washington, with upcoming milestones to be completed with the IRS. Please check out Nivenly’s

webpage and Hachyderm account for updates as we

reach different milestones.

As relevant to Hachyderm: since the Nivenly Foundation funds Hachyderm and other projects, this means

that Nivenly donations and memberships also support Hachyderm. The main difference between the paths

of supporting Hachyderm is that Nivenly members take part in member elections and non-member sponsors

and donors do not. Regular donations do not count as or toward memberships, but you can change your

preference from donor to member at any time.

General Nivenly Membership can be purchased through Nivenly’s new Open Collective page.

If you are looking to join Nivenly as a project or trade member, you must email info@nivenly.org.

Thank you everyone!

Our first set of Thank Yous will be added here in April, one month after our March 2023 release.

Updates afterward will be quarterly in June, September, and December 2023. We will update from our

public GitHub Sponsors primarily, as we are treating private GitHub Sponsors, Ko-fi, and Stripe donations

as private. If you have sent us a donation via one of these and do not want it to be private, and do want

to be on this page, please contact us at admin@hachyderm.io. The amount

each donor donated will not be listed on this page, name/handle only.

2 - Welcome

New to Hachyderm and Mastodon in general? These docs are for you! For deeper documentation about Mastodon features, please refer to the Mastodon section.

Hello Hachydermians

Hachydermians new and old, and recent migrants from other instances

and platforms: hello and welcome!

This section is devoted to materials that help Hachydermians interact

with each other and on the platform.

Quick FAQs for this section

I’m new to Mastodon and the Fediverse - help?

The two largest external resources we’d recommend are FediTips and Mastodon Help.

FediTips are great for iterating as you become more and more familiar with engaging

on the Fediverse. Mastodon Help is a great “101 Guide” to just get started.

I’m new to Hachyderm - help?

First of all: welcome! We encourage new users to post an introduction post

if and when they feel comfortable and ready. This can also help you engage with the community

here as people follow that hashtag.

Accessible content is important on the Fediverse. The quick asks of our server are outlined

near the top of our Accessible Posting doc. The doc

itself dives into those with more nuance, thus the doc page itself is quite long. We recommend

reading and understanding just the asks when you’re getting started, then coming back once you’re

in a place to dive into the deeper explanations.

Beyond that, you will need to understand our server rules

and permitted account types (if you’re looking to create a non-general

user account). The Rule Explainer doc, like the Accessible Posting doc, has the short

list of rules at the top and then delves into them. Similar to Accessible Posting, we recommend

that you familiarize yourself with the short list first, then read more once you’re in a

place to read the nuance.

What is hashtag etiquette on the Fediverse?

Hashtag casing, and not misusing restricted hashtags, are important

aspects of hashtag use on the Fediverse. For more, read our Hashtag

doc.

What are content warnings and how/when do I use them?

Content warnings are a feature that allows users to click-through to opt-in to

your content rather than be opted-in by default. Our Content Warnings doc

explains this, and how to create an effective content warning, in more detail.

Content warnings are most commonly used for spoilers and for accessibility. For more about

the latter, please read our Accessible Posting doc.

Why are the links in some profiles highlighted and green? What is verification?

Mastodon uses a concept of verification that works like

identity verification (Keybase, KeyOxide). For information about

how you can verify your domains and so on, please see our

Mastodon Verification doc.

The world is on fire, how do I help support myself while enjoying Hachyderm and the Fediverse?

There are many tools that allow you to control what content you

are exposed to and how visible you want your account and posts to

be. For information about these features and more, please see

our Mental Health doc.

2.1 - Accessible Posting

Introduction to posting accessibly for new users.

How to create accessible posts

This documentation page is an introductory guide to posting

accessibly on Hachyderm. As an introductory guide there are topics

and sections that will need to be added and improved upon over time.

If you are looking for the short version of our asks here on Hachyderm,

please read “What do we mean when we talk about accessibility”,

“what you should know and our asks”, as well as the summary at the end of

the document.

If you are looking for the underlying nuance and context to apply them more

effectively and take an active part in maintaining

Hachyderm as a safe, active community, please read and reflect on each of the

deeper sections.

What do we mean when we talk about accessibility?

Accessibility means that as many people as possible can

access your content if they choose to. Accessibility does not mean

that you cannot otherwise intentionally gate your content,

for example via a content warning. Rather,

accessibility refers to the many ways that people typically create

unintentional gates around their content.

What you should know and our asks

No one on Hachyderm is expected to be an expert. Everyone on Hachyderm

is asked to approach accessibility with a growth mindset and to iterate

and change over time.

Whenever you receive a request from a group you are not yet

familiar with, or who you do not interact with often enough to have cultural

fluency, please take that request as a growth opportunity. This growth

can happen with sustainable time and effort on your part.

Our asks

When posting accessibly:

- Include effective alt text for images.

- Note you cannot add alt text after posting by editing a post. This includes both creating

new alt text that was neglected or fixing existing alt text. The common workaround

is to reply to your post with the alt text.

- Use PascalCase or camelCase for your hashtags.

- Learn how to write effective summaries for audio / video content.

- Prioritize audio content with captions and transcripts where available.

- Be aware how often you post paywalled content; not everyone has the same purchasing power.

- Learn how and when to use effective content warnings.

- When writing posts for an international audience, minimize use of slang

and metaphor and instead use literal, direct, phrasing that can be easily

translated by translation tools.

- When someone makes a mistake regarding any of the above, please either help

them if you have the emotional space to do so or move on. Do not shame them

or sealion them.

Content warnings in particular are a useful feature that applies to many

situations. As a reminder, we request and recommend content warnings

as a general rule as opposed to requiring them. (Please see

this document’s summary for more information about why this is.)

The remainder of this introductory doc page will supply context

and nuance to the above asks. The use of content warnings will come up

heavily for the interpretive section.

Interactions on the internet

Breaking down the different ways that we send, receive, and interpret

content on the internet can help when building an internal framework

for “what is accessible”.

When receiving content on the internet, that content is typically:

- Visual

The text on this page, static or animated images, video.

- Auditory

Non-visual audio content like podcasts, or audio content with visuals like a video.

- Tactile

How we interact with visual content by “clicking here” or otherwise

interacting with the content we are receiving.

- Economic

Content that requires individual purchase or subscription to access.

When sending content on the internet, the content is typically:

- Visual

Same as the above, but something we are sending rather than receiving.

- Auditory

Same as the above, but something we are sending rather than receiving.

- Tactile

Same as the above, but something we are sending rather than receiving.

When interpreting content on the internet, we are using:

- Our available senses

- Our neurodiversity

- Our lived experiences, including but not limited to our socialization and culture

- Our moral compasses and ethical alignments

- Our primary language(s), spoken and signed

- And so on.

Generating accessible content is the combinatorics problem of the above. Most commonly,

accessibility is implemented by creating a “sensory backup” of the primary delivery of the

content. For example, if the content is audio, it will have a (visual) transcript. If the

content is visual, it will have descriptive text that can be audibly read. And so on.

The interpretation aspect of content is where “sensory backups” alone fall short. If

someone is a trauma survivor, having a “sensory backup” of the content does not

solve the particular difficulty they are having. If someone is having sensory overwhelm,

pivoting to a different sense may solve the particular difficulty they are having

but it may also not. To dive into that a little deeper: if the difficulty they are

having is that the web page is visually noisy, having a transcript that deeply

describes all that visual noise and instead makes it auditory doesn’t necessarily

solve the difficulty. In fact, it might not even be desirable.

Mastodon and Hachyderm

For the rest of this article, we will describe the ways that Hachydermians can

begin to maximize the accessibility of their posts within the context of Mastodon.

We will not describe how the Mastodon software itself can be improved.

This is only because that exceeds the scope of this

page and our influence, not because it is unimportant. For those of you who have

ideas for how Mastodon itself can be more accessible, we recommend

making PRs or opening GitHub issues on the Mastodon project repo.

Navigating the complexity

To restate, this is an introduction to some of what you will want to learn and internalize

in order to create posts that are more accessible. Note that we didn’t say “posts that

are accessible”, only “posts that are more accessible”. The reason for this is the

scope of humanity is broad, and learning about others is a lifelong journey. Being

truly accessible not only with posts, but with software design and just general life,

is an end goal you should strive to attain even if it can’t be truly achieved.

Sensory

This first set of “things to consider” when you are creating content is based on

the senses we described above that are used when others are receiving the content

you are creating.

Visual

This section will be the longest one and will interplay with other sections below. That is

because a lot of the content on Mastodon is visual in nature, whether it is plain text,

memes, or animated GIFs. Some common examples:

- Images, static and animated

- Videos

- “Fancy Text” and special characters

- Emoji

- Hashtags

The direct asks for each of these:

- Include effective alt text

- Should have a summary, similar to the function of alt text

- Minimize usage of “fancy text” and special characters

- Favor longer, complete emoji names over shorter names

- Use CamelCase

The context

How do people who do not see, or do not see clearly, interact with the content above? Typically,

via screen readers. Screen readers are designed to not only read plaintext documents, like this page,

but also to read any text associated with a visual. For images and video:

- Images: will need to have alt text

- Videos: either a summary description and/or full transcript as appropriate

But what about “fancy text”, emoji, and hashtags? In fact, what do we mean by “fancy text”?

“Fancy text” / special characters actually warrant an article of their own, and Scope has a lovely 2021 article titled

How special characters and symbols affect screen reader accessibility.

The article shows how different special character “fonts”, typically used to create italics or other visual

effects, are read by screen readers for those who use them.
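
To make the idea of special character “fonts” more concrete, here is a minimal Python sketch using only the standard library. It assumes the fancy text is built from Unicode mathematical alphanumeric symbols, which is one common way these effects are produced. The function names are hypothetical, and the sketch does not reproduce how any particular screen reader behaves; it only shows that each fancy letter is a distinct character with its own long name.

```python
import unicodedata


def describe_characters(text: str) -> list[str]:
    """Return the official Unicode name of each non-space character."""
    return [unicodedata.name(ch, "UNKNOWN") for ch in text if not ch.isspace()]


def to_plain_text(text: str) -> str:
    """Fold compatibility characters (such as math script letters) to plain ones."""
    return unicodedata.normalize("NFKC", text)


# "Hi" written with mathematical bold script letters instead of plain letters.
fancy = "\U0001d4d7\U0001d4f2"

print(describe_characters(fancy))  # one long character name per letter
print(to_plain_text(fancy))        # the plain-text equivalent
```

Running the fancy string through `to_plain_text` before posting is one possible way to keep the words readable, at the cost of losing the visual effect.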

The case is similar for emoji. While in the standard emoji set there is associated

text for a screen reader to read, like a thumbs up 👍, when reading the text for a

custom emoji the only text available is the name that is supplied between the colons.

Importantly, this is why here on Hachyderm we favor emoji names like “:verified:” rather

than “:v:”, even though the latter is shorter. When a screen reader encounters the text

“Jayne Cobb :v: :gh:” it will read “Jayne Cobb v g h”. On the other hand when a screen

reader encounters “Jayne Cobb :verified: :github:” it will read “Jayne Cobb verified github”.

One of these is significantly more accessible than the other.
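
The difference can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a model of any real screen reader: it assumes custom emoji appear as `:name:` shortcodes and that the name between the colons is the only text available. The function names and the `min_length` threshold are hypothetical.

```python
import re

# Matches a custom emoji shortcode such as :verified: and captures the name.
SHORTCODE = re.compile(r":([A-Za-z0-9_]+):")


def spoken_text(post: str) -> str:
    """Replace each :shortcode: with its bare name, the only text available."""
    return SHORTCODE.sub(lambda match: match.group(1), post)


def terse_shortcodes(post: str, min_length: int = 4) -> list[str]:
    """List shortcode names shorter than min_length (a hypothetical threshold)."""
    return [name for name in SHORTCODE.findall(post) if len(name) < min_length]


print(spoken_text("Jayne Cobb :verified: :github:"))
print(terse_shortcodes("Jayne Cobb :v: :gh:"))
```

A check like `terse_shortcodes` could be used to flag emoji names that are probably too short to be meaningful when read aloud.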

Hashtags are the last heavily used “type” in the visual section. Many screen readers are aware of

and able to read hashtags, but only when they use alternating case (PascalCase, camelCase).

For those unfamiliar, that means that you should use the hashtag #SaturdayCaturday not

#saturdaycaturday. To show the difference,

Belong AU has an excellent 17 second clip showing how screen readers read hashtags.
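
As a rough illustration of why casing matters, the Python sketch below builds a PascalCase hashtag from a list of words and then splits a hashtag back into words at each capital letter. It assumes ASCII letters and digits only, and the splitting rule is a simplification chosen for this example, not the algorithm of any specific screen reader. The function names are hypothetical.

```python
import re


def to_pascal_case_hashtag(words: list[str]) -> str:
    """Join words into a single hashtag, capitalizing the start of each word."""
    return "#" + "".join(word.capitalize() for word in words)


def split_hashtag(tag: str) -> list[str]:
    """Split a hashtag into words wherever a capital letter starts a new word."""
    return re.findall(r"[A-Z]?[a-z0-9]+|[A-Z]+", tag.lstrip("#"))


print(to_pascal_case_hashtag(["saturday", "caturday"]))
print(split_hashtag("#SaturdayCaturday"))  # word boundaries are recoverable
print(split_hashtag("#saturdaycaturday"))  # one undivided run of letters
```

With no capital letters to mark the boundaries, the all-lowercase hashtag comes back as a single run, which is the situation the casing ask is meant to avoid.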

Audio

Common sources of audio or audiovisual content on social media are:

- Podcasts, recorded messages, and so on.

- Audiovisual content like YouTube, TikTok, etc.

The asks for these:

- When the content is your own, please have a transcript or similar available.

- When the content is not your own, please favor content that has a transcript for longer

content as often as possible.

- In either case, when posting the content include a short summary (similar to the function of alt text).

The context

Due to the sizes of audio files in posts, most audio content, or audiovisual content in the case

of video, is not hosted on Hachyderm. Linked content comes from various news pages, podcast pages,

Twitch streams, YouTube, TikTok, and so on. Unless you are the streamer, this also means that you

don’t have as much control over how the content is displayed or rendered, as you would for embedding

a GIF or meme (with the alt text, etc.). For this reason, the biggest ask here is that you summarize

audio or video when you post it, so that someone can get the gist of what is posted even if they

cannot directly use the content. It also helps to start to be aware of what sources have captions

(many video sites offer automated captions) as well as transcriptions. If you would like an example

of a podcast that has a transcript, take a look at any of the episode pages for

PagerDuty’s Page It to the Limit podcast.

Noise

“Noise” in this sense applies to:

- “Too much” audio and/or visual content

- “Too loud” audio and/or visual content

The asks for these:

- Please call out in your post if your linked content fits either of the above.

The context

For an example of what might be generating audiovisual noise, try navigating the internet without

an adblocker or script blocker. Risk of malware aside, there are a lot of audio and/or visual

ads placed all over web pages and there are frequently pop-ups, notifications, and cookie

consent windows as well.

These situations are usually frustrating when you’re trying to navigate the situation as-is, let alone

what happens when you’re trying to convert the page to one particular sense (auditory or visual).

Most of these situations do not apply on the Fediverse directly. They appear when posts link to other

pages and content. To be clear, on Hachyderm we do not ask you to be responsible for the entirety

of the internet. That said, if you are posting content that might be “noisy”, it might be worth

mentioning that in the post that supplies the link.

Interpretive

Interpretive accessibility is about how our minds understand presented information. This is a very

broad set of topics as our minds use a lot of data to process information. As an introduction,

some common areas to consider for making interpretation more accessible are listed below.

Almost exclusively, the ask for assisting with accessible interpretation is:

Since the ask is almost always the same, unlike the above section this section will not have

a “common examples” and “direct asks” pattern. As an introduction we’re

calling out some of the most common barriers to interpretation, offering a suggestion for handling them,

and reminding everyone that we do not request or require anyone to become experts. Our main ask

is that you continue to learn and grow in awareness.

Neurodiverse

Neurodiversity is the umbrella term for “the range of differences in individual brain function

and behavioral traits, regarded as part of the normal variation in the human population”. (Oxford

Dictionary) A few common attributes that are part of neurodiversity are:

- ADHD

- Dyslexia, Dyscalculia

- Autism / Spectrum

There are more than these. The main underlying factors that define different aspects of neurodiversity

are things like verbal / written skills, hyper/hypofocus, sensory interpretation (e.g. overwhelm when there’s

too much sensory input), mental visualization, and so on.

The Web Content Accessibility Guide article on Digital Accessibility and Neurodiversity

has some excellent tips on the software design level that can also help you build your mental model

while interacting with others.

Within the context of Mastodon, these will usually come up via links to shared content rather than

anything hosted on the platform itself. That means that what you can do is include the relevant information

when you are posting a link to other content. This can either be via a description in the post itself

or, where relevant, crafting a content warning for the post.

Medical

One of the most common medical conditions that can cause issues with audiovisual content is seizures.

Photosensitive seizures can be triggered by strobing, flickering, and similar visual effects.

This would only come up if/when a user posted an animated image or video that contained effects similar to

these. If you would like to read about this in more depth, please take a look at

Mozilla’s Web accessibility for seizures and physical reactions page.

The other two primary medical conditions that come up when interacting with social

media are eating disorders and addictions. The former can be triggered by images of food,

discussions of weight gain or loss, and so forth. The latter can be triggered by images

and discussion around any addictive substance, which includes but is not limited to:

food, alcohol, various recreational drugs, and gambling.

We do not ask that Hachydermians be medical experts in order to interact on the platform.

The main ask is to be aware of situations like these and use content warnings when posting

content that might be triggering to these groups.

Traumas and phobias

Trauma is a very broad category, and the nuance of what can trigger trauma varies between

individuals. That said, there are some common examples of posting patterns that can be

assumed to be generally traumatic:

- If posting about trauma to an individual member of a community, either via a news cycle

or personal experience, in all likelihood the trauma for the collective group will be triggered.

- If posting about any sort of violence, it can be assumed to be traumatic even to those

who have never experienced that type of violence. This includes various forms of violent

trauma humans can inflict on each other as well as animal abuse and abuse to our environment.

- If posting about wealth and poverty, and the topics in-between, it can be assumed that

this will trigger the trauma of the many who have had to interact with economic systems

from a place of disadvantage.

There are many more traumas than these. There are also common phobias that humans have

where the response patterns in the mind and body very directly mirror what happens in

a traumatized person that has been triggered. Common categories of phobias include:

- Death

- Disease

- Enclosed spaces

- Heights

We do not ask that Hachydermians be experts in trauma and phobias in order to interact

on the platform. We do ask that users use content warnings when discussing heavy topics

like the above. This is because, while there is a lot to be gained from discussion, those

most impacted will see the same traumatic conversations over and over again. Especially if

it’s the Topic Du Jour (or week) or something has happened in a recent news cycle to prompt

many simultaneous discussions.

Language accessibility and ease of translation

The main goal here is to ensure that both plain text and text descriptions of media

are copy/pasteable so they can be translated into a different language than they were

composed in. This allows users that may not be fluent, or fluent enough, in the language

the text was written in to use translation tools for assistance.

For clarity: we do not expect any individual to be a hyperpolyglot. We do not expect Hachydermians

to post translations of their posts either. What we are asking is for you to be aware of the issue

and to be aware if you are posting something that cannot be copy/pasted into a third party

tool for translation assistance if someone needs to do so.

Some examples:

- Video content with captions in any language: can another language tool be used to translate

the captions and/or does the video host support multiple languages for their captions?

- Video content with transcript: can that transcript be copy/pasted into a translation tool?

- Plain-text post: can the post be copy/pasted into a translation tool?

- Slang: most regional slang doesn’t translate well when using tools. If you’re making a post that

you want others to be able to easily translate, minimize the use of slang.

Economic

Within the context of Mastodon, this appears when posts are made that link to paywalled content.

The paywall may be a direct purchase for that specific piece of content or the content is hosted

by an entity that requires a subscription to access.

From an accessibility and equity mindset: while people should be paid for their work, it is important

to remember that not everyone can pay for the access to that work. They may be disadvantaged overall,

or may live outside the country or countries that are allowed to pay for access to it.

Another common pattern is for user data to be a type of payment. In this situation, someone must

typically supply their email and some demographic information for free (as in currency) access

to the content. Similar to the above, this can be an accessibility issue for those who have reason

to only share their information cautiously. This is especially true in light of increasingly common

data breaches, where supplied data can be used to target individuals and groups.

Here on Hachyderm we do not moderate you for posting paywalled content. Within the context of

accessibility, we ask that you are aware (and call out) when you do and that you manage what

you choose to share with care.

Summary

The length of this particular document should tell you that being accessible requires time and effort.

As only an intro guide, it should also tell you that there is a lot happening on our biodiverse sphere.

Diversity is one of the primary reasons we request, not require, use of content warnings in most

cases. This is because there are many ways two or more groups may be in a state of genuine conflict

without anyone being in the wrong. One quick example could be if someone was posting about weight loss

or gain as a response to recovery from a medical issue that triggered someone else’s eating disorder.

Another might be someone who needs to scream about how transphobia hurts them, while someone else needs to not

be reminded that’s still happening today.

Hachyderm needs to be able to accommodate all of these situations and more. To do so, we try to

create space for disparate needs to co-exist. For situations where instance-level policy wouldn’t be

beneficial to the community, we ask individuals to create and maintain their personal boundaries

in a public space. We also ask everyone to use common keywords and hashtags

so that those who are looking to filter that content can do so easily. As always, please report

malicious and manipulative individual users and instances to the moderation team.

As you learn and grow you may want to help others as well. This is great! Remember to do so only

when you have the emotional space to help with grace. Different people are at different stages in

different journeys, which means that the person who you are frustrated with for not understanding

one facet of accessibility might be very adept with a facet you know very little of.

If you run into situations where your needs and another’s come into a state of conflict, please

approach each other with compassion and respect. Please also remember that you can always walk away from

disrespectful conversations for any reason. If the other person does not respect your boundaries and/or

the space you are creating for yourself, you can also request moderator intervention by sending us

a report.

2.2 - Content Warnings

How to use content warnings on Hachyderm.

This document describes how to use content warnings and how they are

moderated. To understand the feature itself, please look

at our content warning feature doc.

What is a content warning?

Content warnings are the text that displays first instead of the text and/or

media that you have included in your post. Since the goal of the content warning

is to put a buffer between a passerby and your underlying content, it is important

to have something that is descriptive enough to be helpful for someone to

decide if they should click through the content warning or not.

When to use content warnings

The two main use cases for content warnings:

- A content warning should be used to protect the psychological safety of

others in a responsible way

- Spoilers

A central concept to content warnings is “opt-in”. By using a content

warning, you are creating a situation where other users are opted-out

of the detail of your post by default. They must consent to opt-in.

In an ideal scenario, other users are able to make an informed decision

to opt-in, i.e. informed consent.

Common examples of content warnings

There are a few situations where content warnings are commonly used on the

Fediverse (these are not exclusive to Hachyderm):

- Images of faces with eye contact

- Images of food and alcohol, even if they are not being consumed in excess

- Though they will also be behind a content warning if they are

- Text or media describing or showcasing violence or weapons (including but not limited to guns, knives, and swords)

- This is common when discussing news around war violence or shootings

- Also common when sharing stories of personal trauma

- This is also common when sharing news about personal safety, domestic abuse, or sexual assault

- NSFW content

- Fandom-specific spoilers for various forms of entertainment like TV shows, movies, and books.

Content warnings feature heavily in our Accessible Posting doc,

especially in the Interpretive Accessibility sections.

How to structure a good content warning

The goal of a content warning is to communicate what another person needs

to know, so they can determine if clicking through the warning is something

they want to do or should be doing, especially if the content is describing

a situation that may be a traumatic lived experience or news event. The

question you should ask yourself when you have written your content warning is:

If I was a user that did not want to engage with this post, would

I know to avoid this post with the information provided?

The follow-up question you then need to ask is:

If I was a user that did not want to engage with this post, but did

by mistake and/or an unclear content warning, what is the impact?

Quick example

Before going further, take a look at these two options to be used as a

content warning for the same post about the first episode of the most recent

season of The Mandalorian:

- “Spoilers”

- “Spoilers for the New Season of The Mandalorian”

Which of these two content warnings lets you know if you want to interact with this

post from the perspective of:

- A non-Star Wars fan

- A fan of The Mandalorian who does not care about spoilers

- A fan of The Mandalorian who does care about spoilers

Also: what is the impact to each of these groups if they click through your content

warning and see content they did not want to see?

Remember: The goal is for the content warning text to be descriptive enough that

all of these prospective audiences can decide to opt-in or opt-out. The impact in

this example is intentionally low (someone will see spoilers), but there are

situations where the impact will be higher.

“Spoilers, Sweetie”

(Quote attributed to River Song of Doctor Who fame.)

Preventing the spread of spoilers is an excellent “off label” use of the

content warning feature. Spoilers most commonly refers to current books,

television, and movies. One quick, easy example would be using a content

warning when discussing Game of Thrones when it was actively airing.

Another would be discussing the results of a football / soccer match that

people maybe haven’t been able to catch up on yet. Different fandoms have

different expectations around what should be a protected spoiler or not,

so the general rule of thumb here is to treat other fans the way you’d

want to be treated.

As for moderation, we do not moderate spoiler tags. That said, and as always,

please Don’t Be A Dick.

Protecting Psychological Safety

This topic is covered second because it requires a lot of explanation

for the implementation details. In fact, protecting psychological safety

is a life-long journey and involves understanding others unlike yourself.

The short version of when to use this content warning:

You should use a content warning whenever the psychologically safest

option is to opt into a conversation rather than to default into that

conversation.

Let’s start with a hopefully clear example. If you want to share news

about human rights abuses around the world, you will likely

be sharing links and media that shows the reality of those situations.

This could include a lot of violent and traumatic video and images. Due to

the need to raise awareness, you may want to make sure that people can

see the content to avoid turning a blind eye.

That said: you cannot control the reach of your information.

This means if you do not use a content warning, not only will the people

you wish would pay attention potentially see it (or not, depending on how

their home instance federates), but you could also be exposing victims

of that same violence to content that triggers their trauma. So what’s the

psychologically safest option? To protect the most vulnerable, in this case

the people with trauma, and that means use a content warning. The post will

still have the same reach, but it allows people to opt into that conversation.

In these cases it is to your benefit to use clear content warnings,

e.g. “article about the war in Ukraine, includes

images of physical violence in the war zone”. A content warning that is clear

like this serves the dual purpose of labeling the topic of the post

while also explaining why it is behind a content warning.

Similar cases to the above would be any posts that include text

descriptions of, or images and/or video of, violent actions. To be clear:

our definition of violence includes physical, psychological, or sexual

violence. Animal abuse also counts as violence.

(For more clarification on the various rules, like

“No violence”, please see our Rule Explainer.)

Composing content warnings for psychological safety

Being conscientious about composing a content warning that is intended to

preserve the psychological safety of others is important. The impact

is significantly higher than if someone sees a spoiler before they have a chance to

experience the “unspoiled” content.

When you are composing this type of content warning, ask yourself two

questions:

- Why am I putting this behind a content warning?

- What differs between this and other posts about this topic that

I would not put behind a content warning?

The answer to your first question will likely be short. “It’s about war”,

“it’s about yet another shooting”, “domestic violence”, “eating disorders”,

and so forth.

The answer to the second question is what will provide the nuance.

In the example near the top of the post, it is the difference between “it’s

a spoiler” and “it’s about the latest episode of The Mandalorian”. For

psychological safety, you might find yourself providing answers like:

- 1 ) It’s about the war in Ukraine

2 ) It shows images of people in the aftermath of an explosion.

- Example content warning text:

“Ukraine War - images and video of bomb injuries and death”

- 1 ) It’s about another shooting in the US

2 ) It was at a school and children are scared and crying in the

images / video in the news report.

- Example content warning text:

“School Shooting - images / video of traumatized children but no injuries shown”

Asking the questions in this way allows you to supply the broad topic

as well as describe the nuance that informed your decision to put the

post behind a content warning, especially if you wouldn’t necessarily

do so for the broad topic area by itself.
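As a minimal sketch of the two-question approach above, the broad topic and the nuance can be joined into a single content warning line. The function name `compose_content_warning` and the " - " separator are illustrative assumptions taken from the example texts in this doc; they are not a Mastodon feature.

```python
def compose_content_warning(topic: str, detail: str = "") -> str:
    """Join the broad topic (question 1) with the nuance (question 2).

    Hypothetical helper for illustration only; Mastodon simply accepts
    whatever text you type into the content warning field.
    """
    topic = topic.strip()
    detail = detail.strip()
    if not topic:
        raise ValueError("a content warning needs at least a broad topic")
    # Without the nuance, the warning falls back to the vague topic-only form.
    return f"{topic} - {detail}" if detail else topic


print(compose_content_warning(
    "Ukraine War", "images and video of bomb injuries and death"
))
```

The topic-only fallback is the equivalent of writing just “Spoilers”: valid, but it gives readers less to base an opt-in decision on.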

Nuance and growth

Areas that might take more learning and growth to understand and adopt

are normally those that involve understanding intersectionality of users

on the platform. For example, you may see people use content warnings on

images with faces (especially with eye contact) or over food. With these

specific examples, using a content warning for faces and eye contact is to

help neurodiverse users on the platform who struggle with these and have

strong adverse reactions to them, while using one for food can help those

with eating disorders or who are recovering. (Similarly, a content warning on images of

alcohol can help users who struggle with, or are recovering from, alcohol addiction.)

For taking an active part in keeping Hachyderm a psychologically safe

place to be, it is important to do the work of understanding others

and apply that knowledge to how you interact with each other. There is

a lot to be said here, and it would exceed this document’s scope, but a good place

to start is learning about anti-racism, accessibility, and anti-ableism.

What Do the Moderators Enforce

Succinctly:

- Moderators will not take moderation action against spoilers, but

it really is in poor form to openly share spoilers.

- Moderators will protect the psychological safety of users and prioritize

the most vulnerable.

- Moderators will not, in general, take moderator action due to content warnings (or their lack).

- To rephrase: moderators will request and recommend effective ways to use content

warnings as opposed to requiring them.

- Any exceptions to this are called out on a case-by-case basis.

- Using a content warning as a workaround is not actually a workaround.

- This means that rule violations are not less severe or otherwise

mitigated by putting the offending content behind a content warning.

2.3 - Hashtags on the Fediverse and Hachyderm

How to use hashtags on the Fediverse, including reserved hashtags.

Hashtags are useful ways of connecting with other users. When users

follow hashtags they can see posts that might otherwise be buried

in the fire hose of their local and/or federated timelines.

If you are looking for some popular hashtags to follow, please

scroll down to the Popular Hashtags section

at the bottom. Note that some of the popular hashtags are

reserved. To understand what that means, please read the

Don’t misuse reserved hashtags section.

Hashtag Etiquette

How to have good hashtag etiquette on Hachyderm and the Fediverse:

Just like living languages, hashtags change. Cultural reference to a meaning

or phrase may shift over time, may increase or decrease in usage, or may just

be an inline joke in the post. Noting which hashtags are commonly associated

with post topics, either specific or broad, and using them can help others

who are either opting in or out of the type of content you are posting. (See

filtering content on our mental health doc). As a quick

example, someone looking to connect with other fountain pen users might follow

hashtags like pen, ink, or FountainPen. Likewise, someone who needs to filter

out food and/or drink related content for any reason, might filter out hashtags

like food, drink, DiningOut, and so forth.

You should always use an alternating case pattern, like PascalCase or camelCase,

for your hashtags so that screen readers can read them

properly. (Please see our Accessible Posts doc.) Changing

the case will not change the posts that are attributed to the tag. This means

whether someone is filtering in or out a specific tag, the same posts will be

displayed or hidden.
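The casing behavior described above can be sketched as follows. This is an illustration of the idea only, assuming tags are compared without regard to case; the helper names `to_pascal_case` and `same_hashtag` are hypothetical and are not part of Mastodon.

```python
import re


def to_pascal_case(phrase: str) -> str:
    """Turn 'fountain pen' into 'FountainPen' so each word starts with a capital."""
    words = re.findall(r"[A-Za-z0-9]+", phrase)
    # Only the first letter of each word is changed; existing capitals are kept.
    return "".join(word[:1].upper() + word[1:] for word in words)


def same_hashtag(a: str, b: str) -> bool:
    """Compare two tags while ignoring casing and any leading '#'."""
    return a.lstrip("#").casefold() == b.lstrip("#").casefold()


print(to_pascal_case("fountain pen"))
print(same_hashtag("#FountainPen", "#fountainpen"))
```

Because the comparison ignores case, choosing the cased form costs nothing for people following or filtering the tag, and it helps screen reader users.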

If you are using a hashtag and a non-cased option is offered to autocomplete,

please complete with the correct casing. This is because Mastodon will offer the most

common tag or tags associated with what you are writing. The more users manually

type the cased version, the sooner the cased tag is offered for autocomplete.

That said, one of the big reasons that we do not moderate for tag casing is

because of this feature: users will frequently not catch that their tags have

been overwritten while composing their post.

For completely new tags the tag is stored with the case you compose it with. This is when

you should take additional care: the next person who types the tag after you

will be offered the version you typed as the autocomplete suggestion (as the only one

that exists).

It’s reader discretion for how many tags are “too much”. One or two will likely

be fine, and having every word be a separate hashtag is a chore to read. As a

loose rule, around five tags every two paragraphs should be a reasonable “upper bound”.

We do not, in general, moderate hashtag volume in a post. That said, posts with a high

number of hashtags may be reported and moderated as spam.

What to know about reserved hashtags:

- Can only be used for their stated purpose.

- Can be restricted across the Fediverse or instance-specific.

- Misuse of reserved hashtags may warrant moderator action. This will

be on a case-by-case basis.

This means that these reserved hashtags cannot be used the same as general

purpose hashtags. For example, you can include #cats and #caturday on any

cat-relevant post you wish. You cannot use #FediBlock for any

other reason than to notify other instance admins of a malicious instance

or user.

In order for hashtags to be considered reserved in the Fediverse context, they

must be shown to be:

- Used across the Fediverse

- Extremely limited or narrow in scope

Due to the ever-changing nature of hashtags, we will not track and moderate

all Fediverse reserved hashtags, only an extremely limited subset of them.

That said, if someone has notified you that you’ve misused a hashtag

that is intended for a specific use, please remove it from your post

whether it is on this list or not.

The following are the reserved hashtags that we will moderate for misuse. In

every case, misuse is “any use of the hashtag other than how it is specified below”.

- FediBlock

- Should only be used for updating the Fediverse about users and/or

instance domains that need to be suspended via the instance admin tools

or for directly responding to a thread/discussion about a reported

user or instance.

- This tag is followed by instance admins across the Fediverse and as such

is not limited to Hachyderm or even Mastodon instances in general.

- Existing posts using the hashtag can be used as a guide for what details

to include in your post.

- As a general rule, you’ll want to include the user or domain, the reason why,

and screenshots to substantiate. The use of screenshots may vary,

though, depending on the nature of the content being reported.

- FediHire

- Should only be used for job posting and job seeking.

- This tag is followed by both those posting and applying for jobs across

the Fediverse.

- Existing posts using the hashtag can be used as a guide for what details

to include in your posts.

Similar to the Fediverse reserved hashtags, the following are reserved

on Hachyderm.

- HachyBots

- This tag is required for all bot posts on Hachyderm to allow users

to easily opt in or out of bot content.

- Use of this hashtag for any other purpose will warrant moderator

action.

General use hashtags are “normal use”. This means you have broader discretion

over how/when/where you use them. The general rule here is “have fun, don’t

be confusing”.

If you notice that there are topic areas that you post about relatively

frequently, it is a good idea to become familiar with the hashtags in that

space. This is so that people filtering out content

can do so more effectively.

If you’re new to Hachyderm and/or the Fediverse, here are some high traffic

hashtags you may want to look at, grouped roughly by topic. Note that

general and reserved hashtags are both listed below.

- Hachyderm:

Hachyderm,

Hachydermia,

Hachydermian,

Hachydermians,

HachyJobs,

HachyBots,

ThankYouThursday

- Fediverse:

FediTips,

FediHire,

FediBlock

- Animals:

Caturday,

CatsOfMastodon,

DogsOfMastodon,

MonDog

- Job posting / seeking:

HachyJobs,

FediHire,

Job,

Jobs,

HireMe

- Tech Industry:

Data,

OpenData,

Security,

InfoSec,

OpenSource,

Tech,

Technology,

TwitterDown

- STEM:

OpenScience,

Science,

Climate,

ClimateChange,

ClimateCrisis,

Weather,

Space,

Astronomy

- Arts:

Art,

Writing,

WritingCommunity,

Writer,

Poetry,

PhotoOfTheDay,

FotoMontag

- Humans:

News,

Sustainability,

HumanRights,

ActuallyAutistic,

ActuallyADHD

- Misc:

SilentSunday,

Mosstodon,

LichenSubscribe,

3GoodThings

2.4 - Preserving Your Mental Health

How to use the Mastodon tools to help preserve your mental health on the Fediverse.

There are many articles available online about social media, general online

engagement, and mental health. This document is going to focus on a subset

of these and focus on helping you use the Mastodon tools to uphold your

personal needs and boundaries.

Moderation action and individual action

As you navigate the Fediverse, you will frequently run into situations where you need

to decide if a situation can or should be handled by you individually, by the moderation team,

or both. When you are deciding which action(s) to take, the main question you should ask is:

Is the discussion / interaction that I encountered something that I need to avoid

for my own mental health, or is it something that poses a community risk that needs to

be handled by moderation?

A couple examples of discussions or interactions that would have a negative impact on the community:

- Any posts that are supporting or perpetuating racist, homophobic, transphobic, and other *ist / *phobic viewpoints.

- Any interactions where a user or users is targeting an individual or group for stalking and/or harassment.

A couple examples of discussions that can have a positive impact on the community as a whole

but can have a negative impact on individuals:

- Posts sharing and discussing news cycles around individual or state violence,

even if they are condemning that violence.

- Individuals and communities that are the current or historic target of that violence may be triggered.

- Posts about individual exposure to, or recovery from, various forms of trauma.

- Individuals who have been exposed to the same, or similar, situations may be triggered.

The above examples are not comprehensive, but do show situations where you may need to protect

your own individual mental health even as communities across the Fediverse discuss and interact

in ways that show collective growth.

Being online is a bit like being in a crowded room or stadium, depending on

the audience size. That means when you go to take a step back, you may need

to use some of the Mastodon tools to help you maintain the space you’re trying

to create for yourself either temporarily or permanently.

Unwanted content

These are the features that Mastodon built and highlighted for dealing with

unwanted content:

- Filtering

- You can filter by hashtag and/or keyword

- You can filter permanently or set an expiration date for the filter

- This feature is most useful when you want to completely opt-in or opt-out of content.

- opt-in: perhaps you want to follow all Caturday posts in a separate panel or follow cat posts generally.

- opt-out: you do not want to be subject to the unpredictable news cycles around events that

impact a group you’re a member of. Even if people are expressing support for you, you just

do not want to see any of it at all ever.

- Other users

- Following/Unfollowing

- Following: explicitly opt-in to another user’s posts, boosts, etc.

- Unfollowing / not following: a passive opt-out. You will still see posts / etc. if a user you are

following interacts with them.

- Muting / Blocking

- Mute: You will not see another user’s posts, boosts, etc. but they can see and interact with yours. (Note:

you will not see them doing so.) Users are not notified when they are muted.

- Block: You will not see another user’s posts, boosts, etc. and they cannot see yours. Users are not

notified when they are blocked, but as your content “disappears” for them most users can tell if

they’re blocked.

- Hiding boosts

- Less commonly used tool, mainly helpful if you feel another account’s boosts are “noisy” but their

content is otherwise fine.

- Other instances

- Block

- This is the only action available to individual users. Moderation tools allow for more nuanced

instance-level implementations including allowing posts but hiding media. (Please see Mastodon’s instance

moderation documentation page for the complete list.)

- When you block another server you will not see any activity from any user on that instance. Instance

admins and other users are not notified when you have blocked their instance.

Instructions for implementing all of these features are on Mastodon’s Dealing with unwanted content doc page.

Please refer to the documentation page for the implementation detail for each.

Additional features

There are many more Mastodon features that you can use to help improve your experience of the Fediverse,

in particular in your account profile and preferences settings. These features mostly control the visibility of

your profile, your posts, and the posts of others.

- Limiting how other accounts interact with yours (or not)

- Follow requests

- When enabled, you need to explicitly approve a potential follower. Only approved accounts can follow you.

- Hide your social graph

- When enabled, other accounts cannot see your followers or who you’re following.

- Suggest your account to others.

- Disabled by default. When enabled your account is suggested to others to follow, increasing your visibility.

- DMs, important notes:

- DMs cannot be disabled and you cannot restrict who is able to DM you (e.g. Followers Only).

- When you add a new user to an existing DM, they can see the entire DM history. This is more like adding

a new user to a private Slack channel than the way other tools handle DMs by creating a new group thread with no history.

- Limiting post / account visibility (or not)

- Posting privacy

- Can be set to public, followers only, or “unlisted”. The latter means that someone can see your activity by going

to your profile, but cannot see your activity in any of their individual, local, or federating timelines.

- Opting out of search engine indexing

- How media displays

- Can be set to always show, always hide, or to only hide when marked sensitive. If you find that media such as static

and/or animated images, video, etc. have a negative impact on your mental health, you might want to enable Always Hide.

- Disable content warnings

- If you find that content warnings create more barriers than access, you may want to enable “always expand posts marked

with content warnings”.

- Slow mode

- Enabling this feature means you will need to refresh for new posts to be added to the timeline you’re

currently viewing.

The above are all set in your profile and preferences. We have documented how to configure these settings on

our Mastodon User Profile and Preferences

doc page.

Patterns for a better Fediverse experience

Here we are detailing patterns that you can implement and iterate to improve

your Fediverse experience. The tools that can assist are directly referenced

in each section. For how to implement, please see the docs linked in the above

Mastodon tools at your disposal section.

Control what content you are exposed to

The mental model that helps the most here is to realize that social media by design opts

everyone into all content by default. Additional tooling constrains this default opt

in, either by creating a new default of opt-out or by creating situational opt-outs.

Deciding which opt-outs you want to implement will inform what tools you choose to

use for your benefit.

Proactively filter content

There will be times that you will want and need to filter content. This might be

permanent or temporary.

- Active opt-in

Following keywords and hashtags can provide relief when you need to decompress. Cats, math,

stomatopoda - anything that helps.

- Active opt-out

You can filter out keywords and hashtags to either temporarily or permanently remove yourself

from certain content and discussions.

We recommend filtering out words, phrases, and/or hashtags that:

- You do not want to be exposed to, for any reason.

- This includes not wanting to be exposed to messages of support,

which can have the unintended, added consequence of reminding you

why support is needed. “For any reason” is for any reason.

- You do not want to be exposed to even via reference in a content warning.

Filters will filter out posts with content warnings as well.
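As a mental model of the filtering behavior described above, here is a small sketch. It is not Mastodon’s implementation: the `Post` and `KeywordFilter` classes are hypothetical, and matching is simplified to a case-insensitive substring check over both the content warning and the post text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Post:
    text: str
    content_warning: str = ""


@dataclass
class KeywordFilter:
    keywords: list[str]
    expires_at: Optional[datetime] = None  # None models a permanent filter

    def is_active(self, now: datetime) -> bool:
        return self.expires_at is None or now < self.expires_at

    def hides(self, post: Post, now: datetime) -> bool:
        """Return True if an active filter matches the warning or the body."""
        if not self.is_active(now):
            return False
        # The content warning is searched too, so a post is hidden even when
        # the keyword only appears in its warning text.
        haystack = f"{post.content_warning}\n{post.text}".casefold()
        return any(keyword.casefold() in haystack for keyword in self.keywords)


now = datetime(2024, 1, 1)
one_week = KeywordFilter(["election"], expires_at=now + timedelta(days=7))
print(one_week.hides(Post("details inside", content_warning="Election news"), now))
```

A temporary filter like `one_week` stops matching once its expiration passes, which corresponds to opting back in without having to remember to remove the filter.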

When you filter out content you will still be able to see other posts from other users

you do not actively block in your follows, local, and federated timelines.

Note that you can choose to filter or block content as a separate choice from

choosing to report it to the mods. We will still see the report.

When changing the default opt-in for media, you can either:

- Opt in to all media

This will display all media, regardless of whether the media is marked as sensitive.

- Obscure media marked as sensitive

This will only obscure media if the person posting it marked it as sensitive.

- Obscure all media

This will obscure all media, regardless of whether the person posting it marked it

as sensitive.

Please visit the Mastodon preferences doc to see how obscured / hidden media

appears.

We recommend obscuring media:

- If images and other media are a frequent trigger of any kind.

- If you do not want to be exposed to media that you do not explicitly opt-in to.

Control access to your posts and account

The mental model here is: anything you post or include in a public facing space

should be assumed to be public. There are ways to reduce how public it is and

how long the information is available. Tactics here are particularly useful

if you find your account attracting a disproportionate amount of “trolls” or

other types of malicious activity. (You should also report this behavior

to the mods.)

The usual advice about not disclosing personally identifying information for yourself

or others still applies here. Beyond that, you can also:

- Hide your social graph

This will hide your followers and who you’re following. If you have reason to suspect

that someone may use your account to find others to target, you should definitely

enable this feature.

- Change the default visibility of your posts

By default all posts are public, but you can limit your posting reach by unlisting your

posts or restricting them to followers only. When you unlist your posts, that means they

are still public and visible if someone navigates to your profile, but your posts will

not display in any local or federated timelines.

Combining “followers only” for your posts and enabling “follow requests” so you can control

who follows you is a way to maximize how private your account is.

- Auto-delete posts

This is enabled and configured via the “Automated post deletion” option on the left nav

menu. You can set the threshold from as low as one week to as long as two years. You can

also choose types of posts to exclude from the auto-delete like pinned posts, polls, and

so forth.
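To make the auto-delete description above concrete, here is a rough sketch of the decision it describes. The `Post` class, the `should_auto_delete` function, and the exact bounds are assumptions for illustration (two years is approximated as 730 days); the real behavior is whatever the “Automated post deletion” settings page offers.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MIN_THRESHOLD = timedelta(weeks=1)
MAX_THRESHOLD = timedelta(days=730)  # roughly two years


@dataclass
class Post:
    created_at: datetime
    pinned: bool = False
    is_poll: bool = False


def should_auto_delete(
    post: Post,
    now: datetime,
    threshold: timedelta,
    keep_pinned: bool = True,
    keep_polls: bool = True,
) -> bool:
    """Return True if the post is older than the threshold and not excluded."""
    if not MIN_THRESHOLD <= threshold <= MAX_THRESHOLD:
        raise ValueError("threshold must be between one week and two years")
    if keep_pinned and post.pinned:
        return False
    if keep_polls and post.is_poll:
        return False
    return now - post.created_at > threshold


now = datetime(2024, 6, 1)
old_post = Post(created_at=datetime(2024, 1, 1))
print(should_auto_delete(old_post, now, timedelta(weeks=4)))
```

The exclusions are checked before the age, so a pinned post or poll that you chose to keep survives no matter how old it is.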

Limit how and who can interact with your account as needed

By default, everyone and anyone can follow your account from any other Fediverse instance

that your home instance is federating with. To restrict that behavior, the main feature

is to enable follow requests for your account. This will require you to explicitly approve

everyone who follows your account.

Be aware of how the DM feature is implemented

There is not currently the ability to do much to constrain DMs, so you will need to be

aware of how they work so you can choose how you want to engage with them (or not).

- You cannot currently limit or disable DMs

This means that any account that you have not explicitly blocked can DM you.

- When you add someone to a group DM, they see the history of that group DM

This means that the group DM feature functions more like a private Slack / Discord

channel: when you add a new user, they can see the entire history. To change this,

you’ll need to create a new group DM with the new user(s).

Collective action requires a collective

It is common to feel the impulse to respond to content that we see that has a negative

impact on ourselves and/or others. When these engagements go well, they are an opportunity

to help others grow on their journey to be better humans. When they do not, however,

they can be a source of stress at best or result in harassment at worst.

Improving the experiences of yourself and others on social media is a form of collective

action. There are many, many articles about maintaining or improving your mental health while

taking part in collective action of some kind.

While the research around self-care in that broader context exceeds the scope of this article,

what everyone should remember is this:

It is important for everyone to do their part to create and maintain safe communities

(and build a better world). The reason this is called collective action is because it

takes more than one person to accomplish group-level and societal changes. As with any

effort to build something better, individuals that are doing their part for others must

also remember to do their part for themselves.

Give yourself permission

Questions you should ask yourself when you are deciding whether or not to engage in a

thread / conversation that has a negative impact on yourself and/or others:

- Do you, specifically, need to engage at this moment or can another member of the

community (collective) do it?

- If you need to be the one that engages, either due to expertise or some other reason,

do you have the capacity to do so in the current moment? If not, can you defer until a

time that you do?

Remember:

- The Fediverse is vast and if you see an issue, especially one that the community commonly catches,

it is likely someone else will say / do something.

- Even if you do not have the capacity at the moment and no one else does, protecting your mental

health will ensure your ability to continue to sustainably help others while growing yourself.

Being able to make contributions over long periods of time will almost always yield more and better

results than doing short bursts of high volume.

Relevantly:

- You can leave conversations at any time for any reason. You do not need to justify or inform when

you do, especially if doing so will continue to inflame the situation.

- You can opt-out of any content at any time for any reason. You do not need to justify or inform

when you do, especially if doing so will be negatively impactful to yourself.

- You can also opt-in to content at any time. Any time you feel the need to opt-out of content,

the ability is always there to opt back in when you’re ready.

Opting in and out of topics, especially those that might be personally relevant and soul straining

for you, is a very useful tactic for creating personal boundaries. It limits when and how you are

exposed to that type of content, especially if / when there is a news cycle or other event increasing

the discussion frequency.

Create and maintain interpersonal boundaries

First and foremost: it is not ok for someone to violate your boundaries or for you to

violate someone else’s. To be clear, this section is not referring to stalking and other forms

of harassment. If you are experiencing these, please report it to the moderation team.

The common patterns you’ll see here:

- When someone is asked to stop engaging in a topic of conversation, thread, or with someone else overall and they do not. e.g.:

- “Please don’t talk about TOPIC in this thread.”

- “Please don’t continue to engage with this conversation.”

- “Please don’t continue to engage with me.”

- When a person who is a member of a community posts and clearly identifies an in-group

thread and non-members / out-group persons respond. e.g.:

- “Black Mastodon users…” -> If you are not Black, do not respond.

- “Non-native English speakers…” -> If your native language is English, do not respond.

Both of these patterns involve having a healthy understanding of public spaces and consent.

A conversation thread being on social media does not necessarily mean it is for everyone to

engage with. This concept should feel familiar: if a group is going for a walk and talking

together in a park it does not mean that their walk and conversation is for everyone in that

park at that time. That said, there are also public performances that happen in public

spaces which are intended for everyone. Similar to the examples just provided, there are

frequently communicated boundaries and expectations around private and public engagements

happening in public spaces.

When someone violates your boundaries

If you have asked someone to stop engaging with you and/or your thread, the main tools at

your disposal are:

- Mute the user

They will still be able to follow you and see your posts. They will not be notified they are muted.

- Block the user

They will not be able to follow you, see your posts, etc. They will not be notified that they are

blocked, but they will be able to tell that they are blocked.

- Report the user

Remember you can always escalate to the moderation team.

Which of these actions you take is entirely up to you. Your account is how you navigate the Fediverse

and you are the one that will need to decide which of these are warranted for the situation you are in.

Note that we do not recommend announcing when you have muted / blocked someone, as that defeats

the purpose of removing yourself from the conflict. There are reasonable exceptions to this involving

the safety of others and/or the overall community. In this case we request that you

report the situation to the mods, whether you post about it or not,

as it is the job of moderation to handle instance-level policy such as this.

When you violate someone else’s boundaries

- Step away / adhere to the request. This is true regardless of who is “in the right”. The

conversation is not effective and should cease.

- Apologize, as appropriate. You will need to use good judgement here and should only apologize

if and when you are not emotionally charged in a way that prevents you from using good judgement.

- Depending on the impact to the person setting the boundary, if they’ve asked you to stop engaging

there are going to be times when an apology results in further corrective action from them

including muting, blocking, or reporting to the moderators.

- If this happens this is not a flaw in them or necessarily a flaw in you. Allow them to meet

their own needs without judgement or further engagement.

- If you are a Hachydermian, refusing to let someone walk away regardless of whether they accepted

or rejected your apology, or if/when you don’t have clarity on whether they’ve accepted or

rejected your apology, is a violation of our Don’t Be A Dick

policy at a minimum.

3 - Volunteering with Hachyderm

So you’re interested in volunteering with Hachyderm?

Hachyderm is looking for volunteers across three teams: Moderation, Infrastructure, and Security. Below you’ll find what each team does and what we’re looking for.

Moderation

At Hachyderm, moderation is mission-critical infrastructure. Our moderators focus on community care, education, and de-escalation rather than reactive enforcement. The goal is to create conditions where people have more autonomy over their own experience and less need for hypervigilance, not to control what they see.

We have two tracks for moderation volunteers:

Community Stewardship: Supporting Hachydermians in being their best selves and good Fediverse citizens. This includes growth-oriented interventions, conflict de-escalation, documentation, and programs like our Trusted Reporter Program.

Threat Intelligence: Proactive, research-based moderation. Identifying harassment campaigns, scams, and abusive federation patterns before they reach our community.

Moderators in both tracks can also contribute to abusability testing of the software. This is how we examine the ways that the platform’s legitimate features can be gamed, misused, or exploited for harm. This is distinct from security vulnerability testing; it’s about understanding how normal functionality can be weaponized (e.g. using report systems for targeted harassment, exploiting federation for coordinated attacks). Moderators are well-positioned for this work because they see how features get misused in practice.

Volunteers can contribute to either or both tracks.

We’re especially interested in: For community stewardship, we’re always interested in mental health professionals willing to volunteer as moderators or to assist with policy drafting and review. This helps us build trauma-informed moderation practices. For threat intelligence, we’re always interested in people with platform experience as well as experience testing software for human misuse.

Infrastructure

Our infrastructure team keeps Hachyderm running. This means traditional server administration, including monitoring, maintenance, scaling, and incident response, as well as documentation, automation, and building tooling alongside our moderation team to augment or patch existing tools. Functional patches may be pushed upstream.

What infrastructure volunteers do:

- Operations: Monitoring system health, responding to incidents, managing backups and disaster recovery, and scaling resources as needed.

- Automation & Configuration: Improving deployment processes, maintaining configuration management, and reducing manual toil.

- Documentation: Creating and maintaining runbooks, architecture diagrams, and onboarding materials so knowledge isn’t siloed.

- Tooling: Collaborating with moderation to build tools that support community stewardship, bridging technical operations and community care.

Technologies we work with / are interested in: Linux (Debian, Arch), systemd, Docker, Grafana, Tailscale, and OAuth technologies like Keycloak. The Mastodon stack includes Ruby on Rails, PostgreSQL, Redis, Sidekiq, Node.js (streaming API), and S3-compatible object storage.

We’re especially interested in: Current or aspiring contributors to Mastodon (Ruby/Rails, React) and/or Bonfire (Elixir/Phoenix).

Security

Hachyderm’s security team works across two tracks: one technology focused, the other human focused. Both are essential to keeping our infrastructure resilient and our members safe.

Infrastructure Security: Supporting the security of our servers and services. This includes hardening configurations, monitoring for vulnerabilities, incident response, and abuse testing (identifying technical vulnerabilities that could be exploited). Assisting with finding and patching security vulnerabilities or backlog items on Mastodon or Bonfire is a plus.

Moderation Security: R&D of tools that support our moderation team’s threat intelligence and abusability testing work. This bridges technical security expertise with community protection: building capabilities to identify how platform features can be weaponized, and developing tools to detect and respond to harassment campaigns, coordinated attacks, and other threats before they reach our members.

Volunteers can contribute to either or both tracks.

Technologies and skills: Penetration testing, vulnerability assessment, Linux hardening, container security, OAuth/authentication systems, OSINT techniques, scripting (Python, Ruby, shell), and familiarity with ActivityPub or the Mastodon stack.

We’re especially interested in: Security professionals with experience in application security, threat modeling, or building defensive tooling for online communities.

4 - Open Source Infrastructure

Description of our public infrastructure that keeps Hachyderm online.

This page is missing a lot of details - we’ll be publishing more in November/December of 2024.

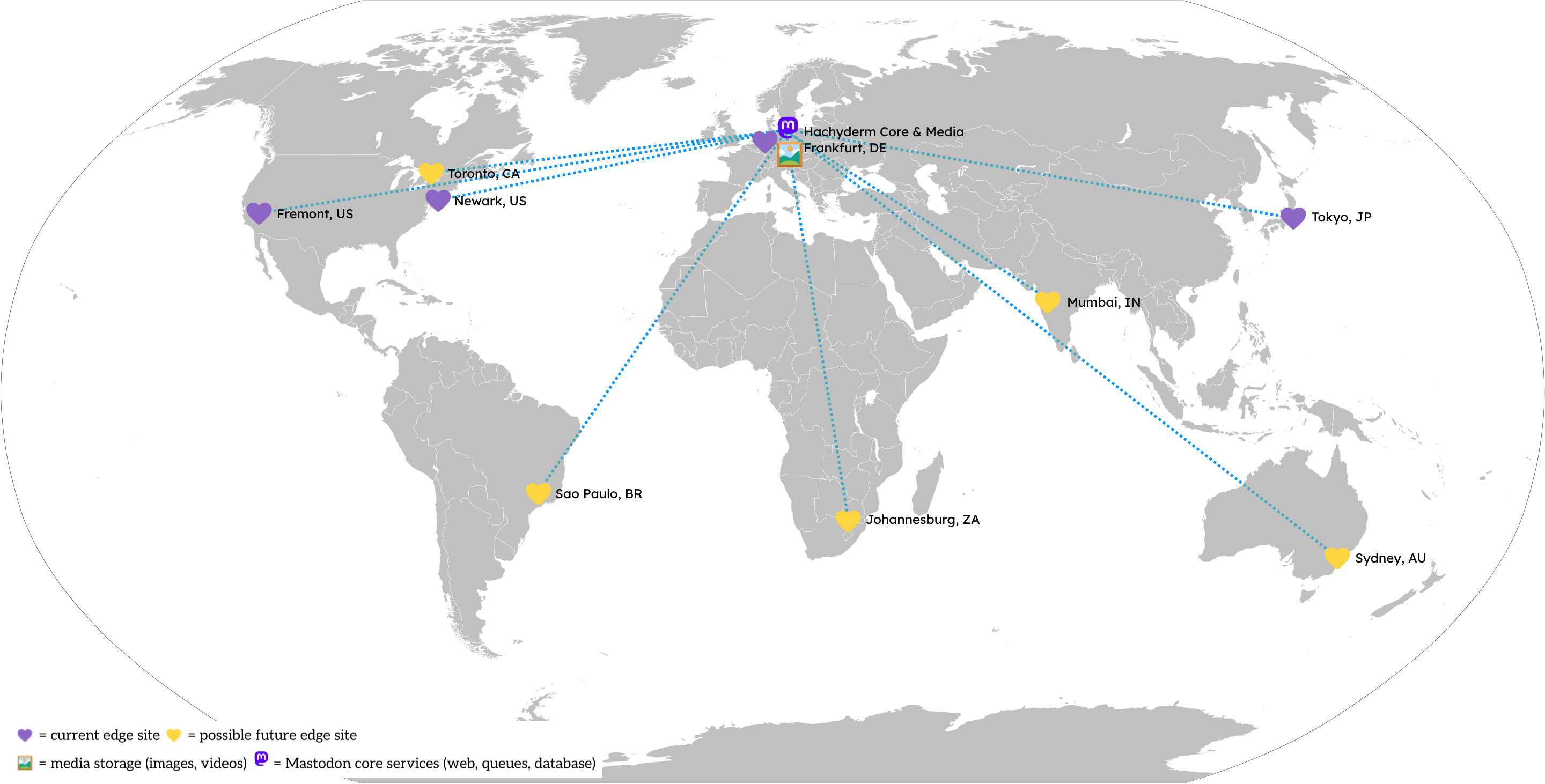

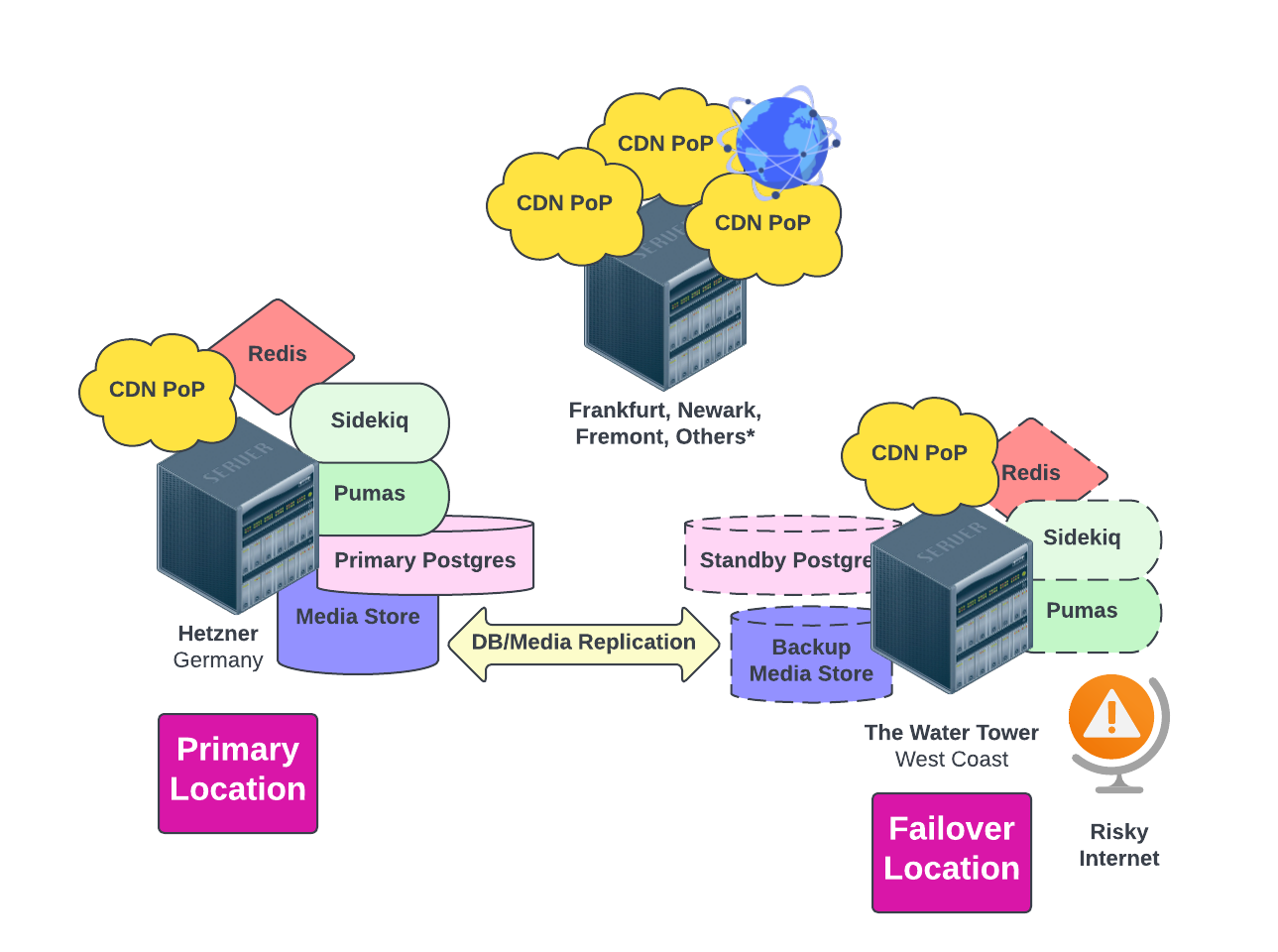

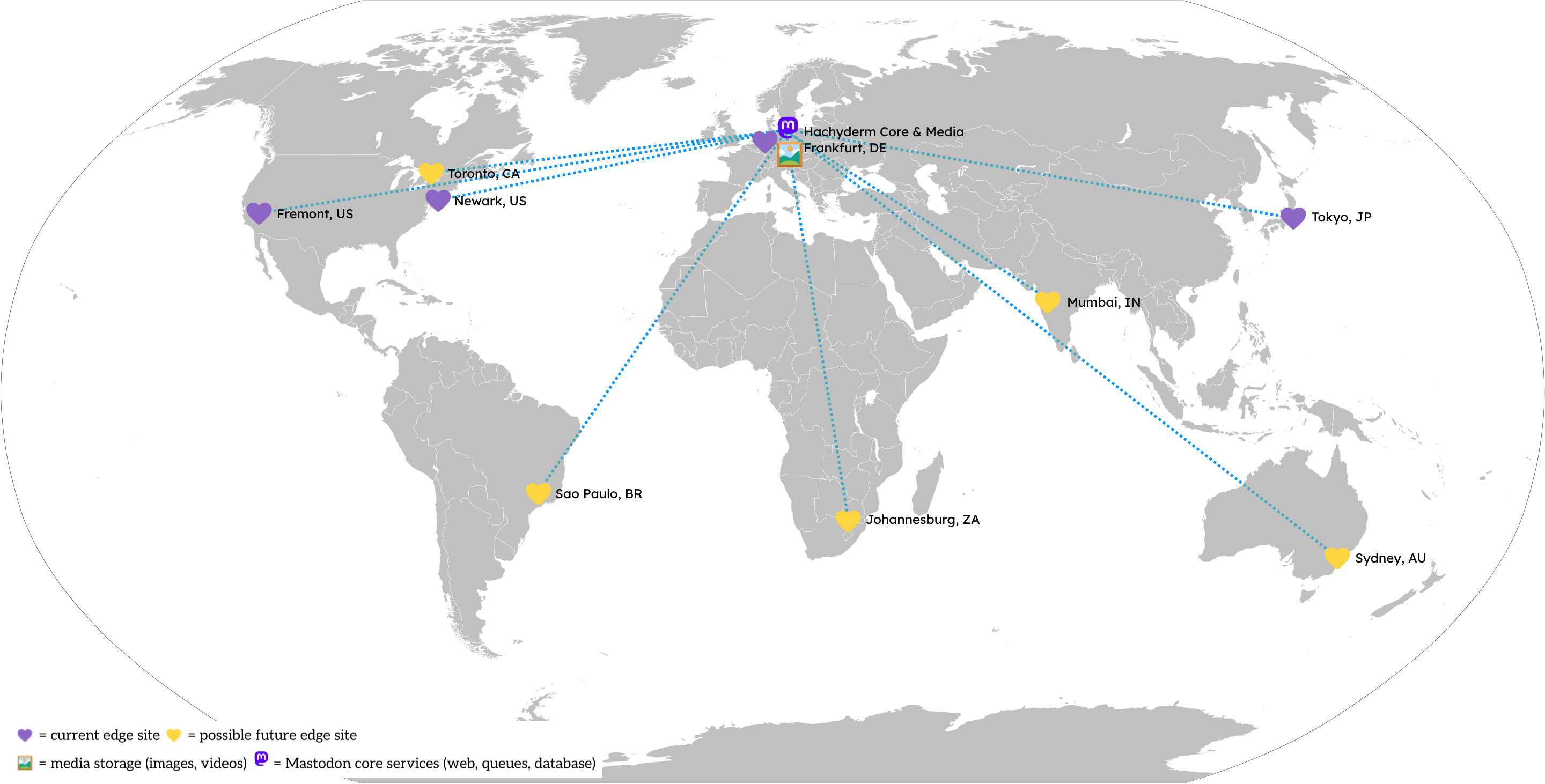

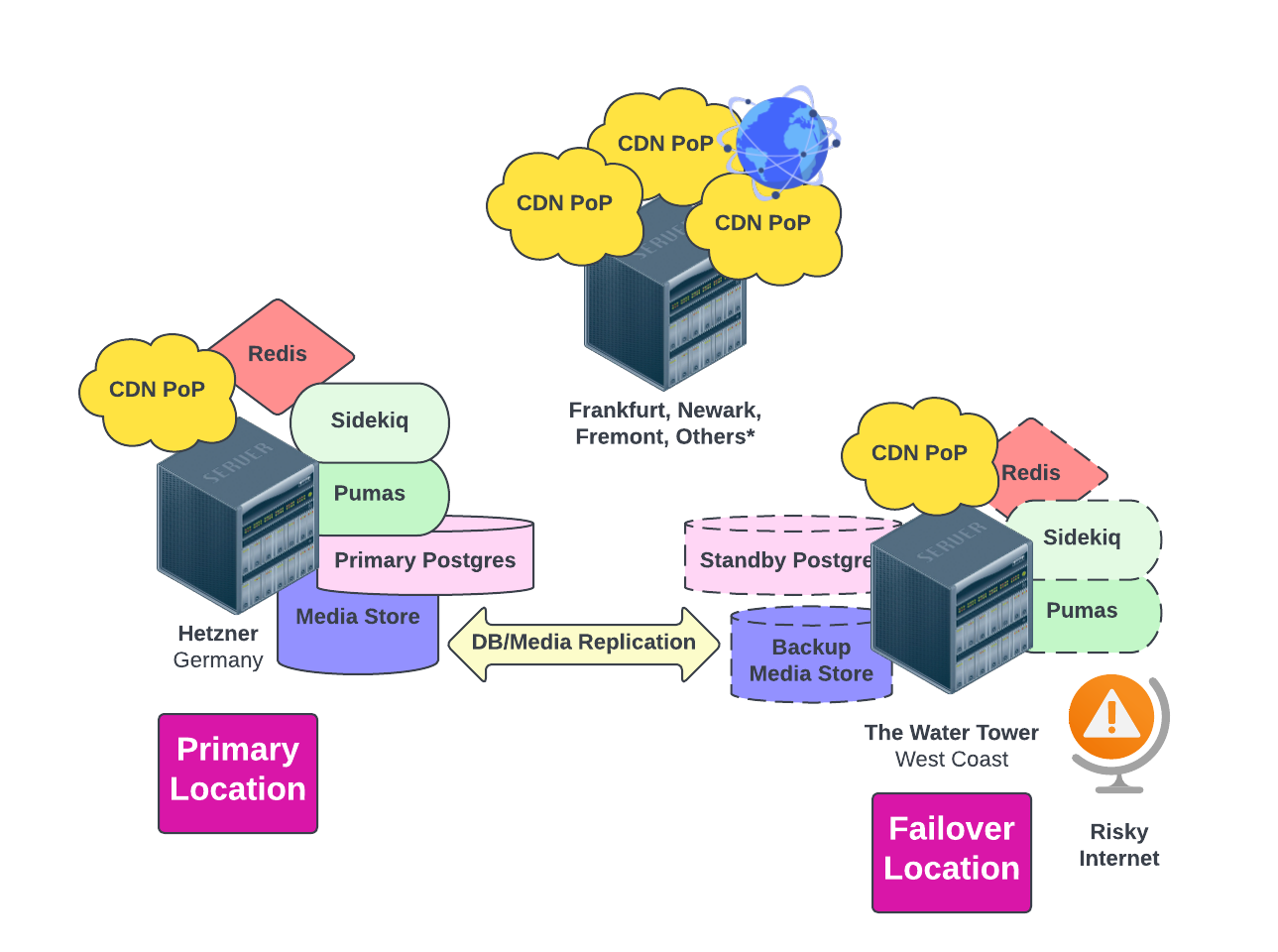

Today Hachyderm runs a multi-cloud, global topology that is distributed across the following service providers:

- Edge node “CDN” - small, lightweight Linode VMs operating around the world. These serve as the front door to Hachyderm and allow us to cache content close to where our users consume it.

- Mastodon Core - physical machines operating in Hetzner in Falkenstein, Germany.

- Media Storage - media (images, videos, audio, etc.) storage hosted in DigitalOcean’s Spaces S3-alike service.

The disparate clouds are linked together using Tailscale to create a resilient, secure global virtual private network.
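
As a rough illustration of the topology described above, the sketch below restates the three tiers as data and traces which tiers a request might touch. The `Tier` type, the `request_path` function, and the rule that a cache miss is forwarded to exactly one backing tier are assumptions made for this example; they are not taken from Hachyderm's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    """One layer of the topology described above (field names are illustrative)."""
    name: str
    provider: str
    role: str


# Providers and roles restate the list above; nothing here is real configuration.
TOPOLOGY = (
    Tier("edge", "Linode", "cache content close to users"),
    Tier("core", "Hetzner (Falkenstein, Germany)", "run Mastodon"),
    Tier("media", "DigitalOcean Spaces", "store images, videos, audio"),
)


def request_path(cache_hit: bool, is_media: bool) -> list[str]:
    """Return the tiers a request would touch in this simplified model.

    Assumption: every request enters at an edge node, and a cache miss is
    forwarded over the private network to exactly one backing tier.
    """
    path = ["edge"]
    if not cache_hit:
        path.append("media" if is_media else "core")
    return path
```

In this model a cached response never leaves the edge tier, which is the benefit the edge nodes are described as providing; how real requests are routed between the tiers may differ.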

Components

Key components of our tech stack include:

(Notice we didn’t say Kubernetes – we don’t currently use it, nor plan to!)

Experimenting in Public

Hachyderm deeply believes there is untapped value left in computer science. We intend on approaching our infrastructure as an opportunity for safe and thoughtful experiments, similar to how the International Space Station conducts experiments in orbit.

We intend on prototyping new technology, operational models, SRE organizational structure, follow-the-sun patterns, and open source collaborative workstreams for our infrastructure. In the coming months, we will be sharing ways in which the broader Hachyderm community can volunteer to support our infrastructure, as well as register hypothesis-backed experiments to run with our data and our services. We are experimenting on the tools and services that support Hachyderm, for example by prototyping databases, HTTP(S) servers, and compute runtimes.

… but never for AI

The Hachyderm team will never use your user data in an experiment, including for the purposes of training LLMs/AI models.

To be even more direct: you, as a Hachyderm user, will never be leveraged in an experiment. We will not be experimenting on you. All user profile data, direct messages, post content, access metrics, demographic detail, and personal information will never be used in any form of experiment.

Volunteering

If you’re interested in volunteering, read more here.

| Component | Asset Location(s) | Provider(s) & Country | Services Provided |
| --- | --- | --- | --- |
| Edge CDN | US - California and New Jersey; DE - Frankfurt; JP - Tokyo; (further expansion planned soon) | US - Akamai | Virtual Machines; Network Backbone |