This is the multi-page printable view of this section. Click here to print.

Hachyderm Blog

- Announcements

- Pixelfed Vulnerability and Impacts to Federation

- Defederation from Threads

- Threads Update

- Crypto Spam Attacks on Fediverse

- Updating Domain Blocks

- Opening Hachyderm Registrations

- Posts

- A Minute from the Moderators

- A Minute from the Moderators

- A Minute from the Moderators

- A Minute from the Moderators

- A Minute from the Moderators

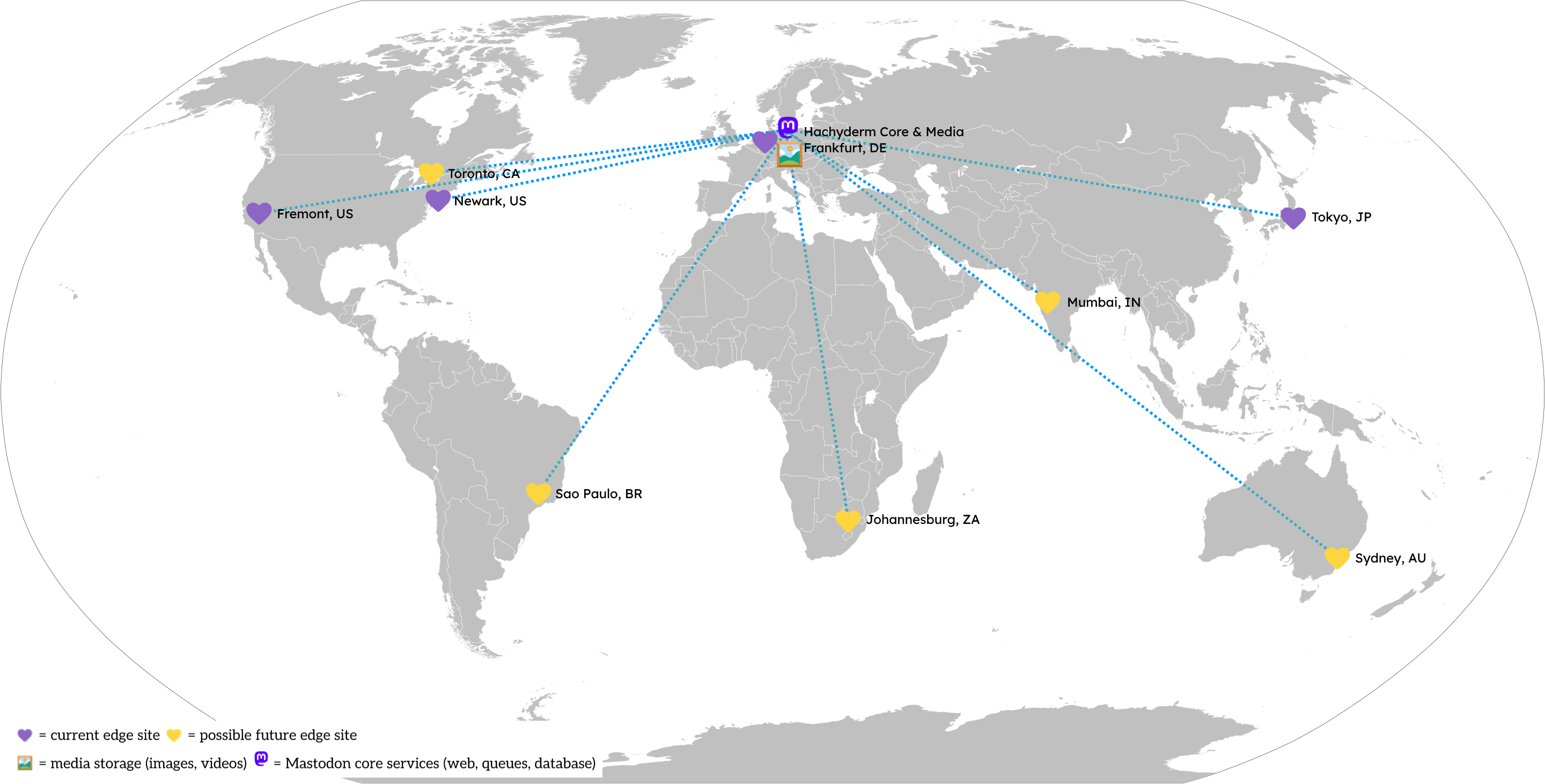

- Ensuring Hachyderm's Future: Improving Safety & Resilience through Strategic Placement of Infrastructure

- Hachyderm's Introduction to Mastodon Moderation: The Report Feature and Moderator Actions

- Hachyderm's Introduction to Mastodon Moderation: Part 1

- MastodonForHarris Hashtag and Mutual Aid Awareness Campaign

- Hachyderm and Nivenly

- The Israel-Palestine War

- A Minute from the Moderators

- A Minute from the Moderators

- A Minute from the Moderators

- Stepping Down From Hachyderm

- A Minute from the Moderators

- A Minute from the Moderators

- Decaf Ko-Fi: Launching GitHub Sponsors et al

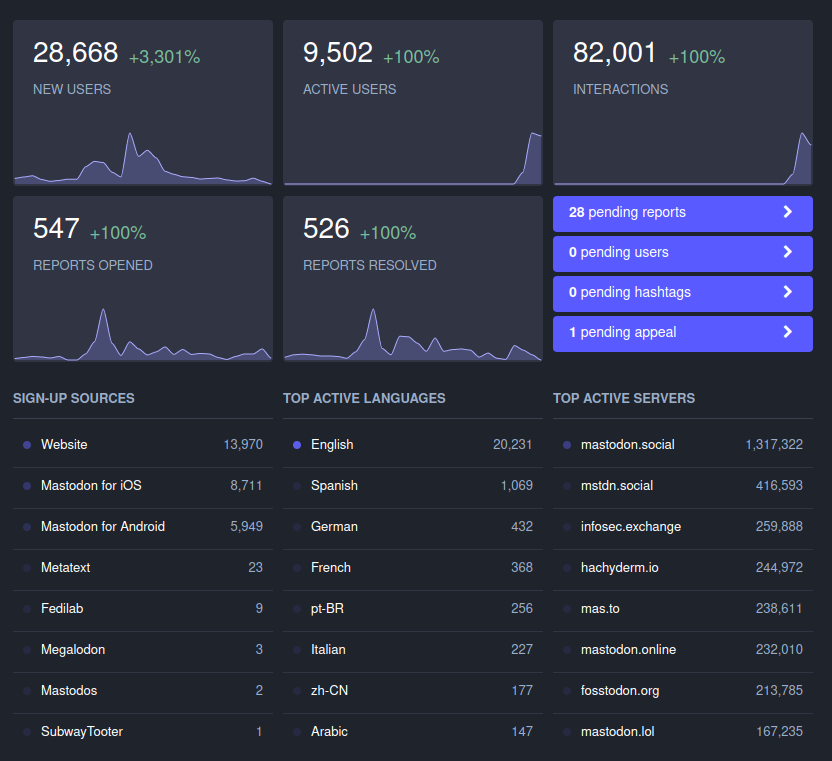

- Growth and Sustainability

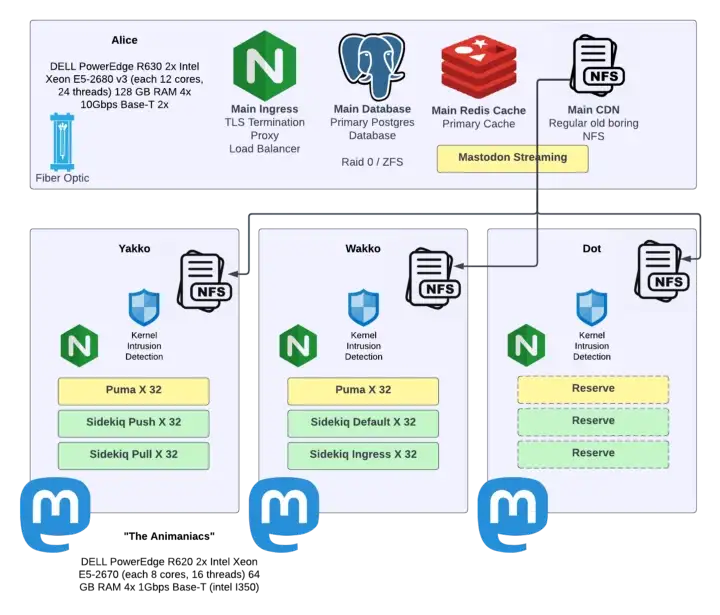

- Leaving the Basement

- Incidents

Announcements

Pixelfed Vulnerability and Impacts to Federation

A recent Pixelfed vulnerability and how it impacts Hachyderm.

If you’re an instance admin and need to reach out to us to refederate, please skip to the “For mods and admins” section (linked on the right nav menu).

Information about the vulnerability

Pixelfed v0.12.5, released 24 Mar 2025, fixes a flaw in how Follows and Followers Only posts were handled by PixelFed. Before this release, private accounts (accounts that require follower approval) could be followed without approval, and any visible post to any user on a Pixelfed instance was accessible to all users on the entire instance. This allowed unintended access to private content. This is true for all instances federating with the Pixelfed instance, not just the instance itself or other Pixelfed instances.

You can read more about this vulnerability here:

- NIST CVE-2025-30741 (Web Archive Link)

- Github Advisory

- Forkus.Cool Blog Post 25 Mar 2025 (Web Archive Link)

- Fediverse Report for 1 April 2025

Why are we talking about Pixelfed and how does this impact Hachyderm?

To restate succinctly:

This vulnerability can expose followers-only posts on all follower-only accounts for all instances using the ActivityPub protocol, regardless of what implementation they are using.

How? The short version.

Understanding the gist of the vulnerability requires understanding a couple of mechanics around followers / following and followers-only posts. For the former, when you “lock” your account or make it “private,” what you are enabling is the ability to manually approve followers. Only once you have approved the follow request is the follower approved and the follower allowed to follow you. (For public accounts approval is automatic unless the account’s instance is otherwise moderated by you or your instance.)

Another part of follow / following mechanics is a process referred to as synchronization. This is the process that makes sure your followers list stays updated. For example, if someone deletes their account, this process removes it from your follower list.

Furthermore, when you make a followers-only post, what is supposed to happen is that post should go to your followers collection only. This means notifying the instances hosting the accounts of your followers to relay that post to accounts specifically in your followers collection.

What was happening with Pixelfed versions 0.12.4 and earlier was that Pixelfed hadn’t correctly implemented account following, which means “locked” / “private” accounts can be followed by any Pixelfed user without explicit approval. This means that posts federated with Pixelfed instances that were marked as “followers-only” were being displayed to accounts that Pixelfed had allowed to follow you, but were not necessarily the followers you intended.

Since Hachyderm is a Mastodon instance, that also means that any instances running Pixelfed v0.12.4 or earlier that we federate with can expose private posts on Hachyderm to these instances, additionally, any pixelfed servers running v0.12.5 may still be exposing followers-only content until Pixelfed implements follower collection synchronization.

What about other ActivityPub implementations?

This same type of vulnerability can happen in other federated software using ActivityPub as well.

This is why you should never post sensitive or highly private content: the privacy of that content is only as strong as the least secure part of the network or software. (The software itself, the server it’s running on, and everything else it needs to run.)

For highly sensitive content, we recommend using services that support end-to-end encryption and limiting who you are sharing it with. Depending on the type of content and how you need to share it, this can include options like Signal or Matrix.

What actions we have taken

Due to the severity of the issue, we have defederated with all Pixelfed instances that are not running version 0.12.5, since versions prior to 0.12.5 allowed for followers that were not approved to access followers-only content.

In addition, since defederation is a silent action (a defederated instance doesn’t receive a notification), we have reached out to impacted instances, where their contact information was available, to explain the situation and how we can resolve and refederate.

Determining the impact

It is always important to make an informed decision when making a server-wide decision as a moderation team. This includes understanding what options are available to you and their impact.

In this case, the only real tool that prevents data exposure on this scale is defederation. All other solutions, like limiting, still allow data to be shared and thus exposed.

Since we knew we were going to need to make multiple defederations, we made sure we knew the full scope of the impact of this action. This is doubly true for this CVE, as we typically prefer to give instance admins time to react to information they receive, and, as many of us in tech know, upgrade paths are not always smooth and straightforward.

For the impact analysis, we gathered the following data:

- How many Pixelfed instances are we federating with?

- What versions of Pixelfed are those instances running?

- How much cross-pollination (followers / following) is occurring on those instances?

As a result of our analysis, we determined that the risk to Hachydermian’s private data was high and the cross-pollination on the impacted instances was very low.

The impact data

Prior to the vulnerability, Hachyderm was federating with 96 instances running Pixelfed. Due to the CVE, we have defederated with 63 of those instances.

(Since this action is due to a security vulnerability, we will refederate with instances that patch the vulnerability. Head to the mods and admins section of this post to learn more.)

A breakdown of the Pixelfed instances we are/were federating with:

- 33 are running 0.12.5 (released 24 Mar 2025)

- 46 are running 0.12.4 (released 8 Nov 2024)

- 17 are running a version earlier than 0.12.4 (0.12.3 was released 1 Jul 2024)

There isn’t a robust way to query Mastodon for how many offline instances it is still trying to federate with, so an unknown percentage of these were already offline with one or more failed retries to connect. To put it another way, it is likely that a number of instances that haven’t been updated “in a long while” were just offline and that information didn’t propagate properly. There just is not a good way to know exactly how many or which. (This is not a bug in Pixelfed, this is common for federating software.)

For the follower / following relationships:

- For 93 of all 96 Pixelfed instances, regardless of software version, there were 5 or fewer following in either direction.

- For 2 of the instances, there were between 5 and 10 following in either direction.

- pixelfed.social is the outlier and outlined in the next section

What about pixelfed.social

Since pixelfed.social is the largest instance, by far, we’re going to show a little more data. To say it first so it isn’t missed:

pixelfed.social is running v0.12.5, so we will not be defederating with pixelfed.social as part of the response to this CVE. The additional information below is only because we provided comparable information, in aggregate, for the other 95 Pixelfed instances.

Now on to the data:

- pixelfed.social is running Pixelfed v0.12.5

- There are 1073 unique Hachyderm accounts following 956 unique pixelfed.social accounts and 864 unique pixelfed.social accounts following 651 unique Hachyderm accounts.

For mods and admins running Pixelfed instances

First and foremost: HugOps. Planning urgent upgrades for CVEs (and related) is stressful and time-consuming, and that is even more true for the volunteer teams across the Fediverse. You’ve got this!

We’ve also got this with you. We’ve done our best to find contact information to reach out to your admins and mod teams depending on what was listed on your instance or documentation if present, to ensure that you were aware of the situation. Due to the volume of instances impacted by this, this process is still ongoing at the time of publishing this blog post.

We’re happy to refederate with any instance that was defederated from as a result of this CVE. As mentioned in our emails as well, please email us once you have upgraded to 0.12.5 and we will refederate with you. You can also respond to the email that we sent out with the same information.

For anyone looking to communicate with Hachyderm moderation in general, please see both our documentation landing page and our Reporting and Communication page.

For fellow Mastodon admins

In the interest of expediency, we published this announcement so that people can see the analysis and impact of our decision. We will publish a separate, shorter, post to include our methods for gathering the above information for the benefit of the broader Mastodon community.

Defederation from Threads

Defederating from Threads

Hello Hachyderm! In alignment with our prior posts about inter-instance federation, and Threads in particular, Hachyderm has defederated with Threads due to their changes in moderation policy as of the publication of this post.

For those interested in learning more about Threads specifically, please read on.

Changes to Meta’s moderation policies and published statements by Mark Zuckerberg

On Tuesday, 7 Jan 2025, Mark Zuckerberg published a series of posts that described changes to Threads moderation practice, and Wired published an article about Meta’s updated moderation (Hateful Conduct) policies. Other articles have been published while we worked on this blog post as the decisions and implications evolve.

In short, Meta updated its Hateful Content policy to explicitly allow harassment for targeted groups and remove earlier protections. We strongly encourage others, especially moderation teams, to look beyond the current version of their Hateful Content policy and also look at the diff between their 2024 and 2025 policies to see what was altered and removed. You can do this by clicking on the Jan 2025 date on the left nav bar.

Threads is now in conflict with Hachyderm’s inter-instance moderation policies

When we consider defederating instances we consider the Hachyderm community, especially vulnerable members. This means that when we evaluate other instances we look at the impacts of those communities on our community.

We initially Limited Threads primarily to make Threads content “opt-in”, as much as Mastodon tools allow, as there are several news sources, government accounts (people and agencies), and so forth on Threads. (Relevantly, among the top followed Threads accounts were POTUS and Barack Obama.) This meant we also committed to manually moderating individual harmful accounts, and interactions with their content, to maintain community safety on Hachyderm while Threads was Limited.

Threads’ recent changes in their moderation policies, both what they’ve put in and what they’ve taken out (read the diff), puts their moderation practices in direct conflict with ours. Essentially, Threads may indeed be large enough that many users are just looking to exist somewhere on social media and are not necessarily de facto fans of Mark Zuckerberg et al., but we anticipate these changes to moderation will shift the user base of Threads in a way that is damaging to the Hachyderm community, so we are defederating from them before that can occur.

Risk and Value Considerations

When we consider defederating, another factor we consider is actual and perceived loss of value to members of the Hachyderm community. Being able to follow and interact with POTUS, local politicians, government agencies, family, friends, etc. from Hachyderm is potentially valuable.

Mid-2024 it seemed likely that Threads would be the social media platform many of those accounts would use or use as their primary social media presence. So it appeared that the long-term value was medium to high, while the slow roll-out of federation allowed us the ability and time to moderate Threads manually with low to low-medium community risk.

Between Meta’s policy changes and the increasing popularity of Bluesky, it seems many individuals are abandoning Threads. It seems likely that politicians and government agencies will or are moving their primary social media presence elsewhere. It now appears that the long-term value is low, and even with the slow roll-out for federation the risk to our community has changed to high.

There’s no perfect “calculation” here, but essentially the long-term risk/reward ratio went from overall medium-high to very low because of how significantly these policy changes increase risk of harm.

What was the data on Threads prior to defederation?

Part of how we handled Threads was meeting the situation where it was at any given time. This is why we were watching their policies, interactions with Hachyderm, news cycles, etc. This is also another reason that we mention here, and in earlier posts, about the slow roll-out of federation. It means that the “instance”, in the federation sense, is significantly smaller than the broader platform. To clarify what this means:

- Not all Threads accounts are federating, in fact most are not.

- Of the Threads accounts that are federating, not all are fully federating.

- In practice, this means that Fediverse users can see and read a user’s Threads posts, the Threads user cannot see Fediverse interactions with their posts. Functionally, this is/was similar to the experience of posting and boosting posts from Twitter bridges.

Specific data:

- There were ~10,000 total Threads accounts federating with Hachyderm.

- There is no way from the data to tell how many of these are fully or partially federating.

- To put this in perspective:

- This is significantly smaller than Mastodon Social (~265k accounts) and approximately the same size as other larger Fedi instances including Mastodon Online (~25k accounts).

- This is approximately 0.0036% of Threads users overall, as Threads has approximately 275 million active users (as of Nov 2024).

- There was one user-generated report regarding a Threads user. In that case it was not about harmful content but a user interaction. There were no other reports sent to Hachyderm moderation about Threads interactions.

To put this in perspective (and acknowledging that these two instances do not have the same moderation policy that Threads now does), we’ve fielded ~500 reports (in total) regarding Mastodon Social and 45 reports (in total) regarding Mastodon Online.

There were 2033 unique Hachyderm accounts following 919 unique Threads accounts and 26 unique Threads accounts following 22 unique Hachyderm accounts.

- For other mods, note that the queries to figure out are not what the Admin Dashboard is running. We’ll put the queries at the bottom of this blog post.

How does this tie into our overall moderation decision? Essentially, the changes to their moderation policy, whether they revert them or not, increase the risk of harm drastically and have a high likelihood of changing the Threads platform account demographics faster than our ability to manually moderate them. In that respect, the decision that we would need to defederate from Threads was made after we reviewed Threads’ current policy and its diff when it was released on Tuesday.

Before enacting the decision, we needed to understand the impact on the Hachyderm community and prepare this statement. Since the queries on the dashboard didn’t provide the level of granularity we needed, Hachyderm moderation and infrastructure coordinated to ensure that we were surfacing the correct data to inform our decision (the last bullet point above and the queries in the next section). This allows us to know how many Hachydermians would be impacted and to what degree. Thank you for your patience in this regard, as due to the significance of the follow/following severances that are occurring we wanted to be accurate in providing this data.

Queries to run to gather the above data

The query to run for “how many unique accounts on my instance are following Threads accounts” is:

Follow.joins(:target_account).merge(Account.where(domain: 'threads.net')).group(:account_id).count.keys.size

Our result in this case is 2033.

The query to run for “how many unique Threads accounts are following accounts on my instance” is:

Follow.joins(:account).merge(Account.where(domain: 'threads.net')).group(:account_id).count.keys.size

Our result in this case is 26.

The query to run for “how many unique accounts on my instance are being followed by Threads accounts” is:

Follow.joins(:account).merge(Account.where(domain: 'threads.net')).group(:target_account_id).count.keys.size

Our result in this case is 22.

The query to run for “how many unique Threads accounts do accounts on my instance follow” is:

Follow.joins(:target_account).merge(Account.where(domain: 'threads.net')).group(:target_account_id).count.keys.size

Our result in this case is 919.

Queries behind the admin dashboard

These numbers are different from what appears in the admin dashboard (instanceDomain.com/admin/instances/threads.net) because the dashboard runs different queries.

The “Their Followers Here” query is:

Follow.joins(:target_account).merge(Account.where(domain: domain)).count

In our dashboard this displayed as 6014.

The “Our Followers There” query is:

Follow.joins(:account).merge(Account.where(domain: 'threads.net')).count

In our dashboard this displayed as 27.

These numbers aren’t deduplicated, so they may not meet your needs when determining how heavily your instance is interacting with Threads.

10 Jan 2025 update: One of the four queries was dropped when we initially published this announcement late Thursday evening. We’ve added the query, as well as taken the opportunity to fix a couple typos and add small clarifiers.

Threads Update

What is Threads?

Threads is an online social media and social networking service operated by Meta Platforms. The app offers users the ability to post and share text, images, and videos, as well as interact with other users’ posts through replies, reposts, and likes. Closely linked to Meta platform Instagram and additionally requiring users to both have an Instagram account and use Threads under the same Instagram handle, the functionality of Threads is similar to X (formerly known as Twitter)1 and Mastodon.

What is the status of their ActivityPub implementation?

As of December 13, 2023, Threads has begun to test their implementation of ActivityPub. As of December 22, 2023, only seven users from Threads are federating with Hachyderm’s instance. For all other users on Threads, we are seeing that the system is not federating correctly due to certificate errors on Threads side. We understand that they are working to resolve those certification issues with assistance from the Mastodon core team.

Based on the available Terms of Use and Supplemental Privacy Policy provided by Meta, they are not selling any of the data they have. This is not official legal or privacy advice for individual users, and we recommend evaluating the linked documents yourself to determine for yourselves.

With regards to the section in the privacy policy

Information From Third Party Services and Users: We collect information about the Third Party Services and Third Party Users who interact with Threads. If you interact with Threads through a Third Party Service (such as by following Threads users, interacting with Threads content, or by allowing Threads users to follow you or interact with your content), we collect information about your third-party account and profile (such as your username, profile picture, and the name and IP address of the Third Party Service on which you are registered), your content (such as when you allow Threads users to follow, like, reshare, or have mentions in your posts), and your interactions (such as when you follow, like, reshare, or have mentions in Threads posts).

It’s important to remember a few things:

- The Mastodon/ActivityPub at their core uses a form of caching of information in order to make the process as seamless as possible. For example, when you create a verified link on your profile, every instance that your profile opens on does its own checks of the links and saves the validation on that third party server. This helps prevent malicious actors from falsifying their verified links that would then trickle out to other instances.

- We don’t transmit user IP’s to any third party instances as part of your interaction. If Meta is able to collect your IP, it would be through a direct interaction with a post on their server or CDN.

How does this impact Hachyderm?

At this point, Threads tests of the ActivityPub do not impact us directly. Based on the available information, they haven’t breached any rules of this instance, they aren’t selling any of the data as discussed above, and the user pool is so limited that even if they did, our team’s ability to moderate that would be quick and decisive. In addition, any users that do want to block Threads at this time, can follow the instructions in the next section to pre-emptively block Threads at their account level.

As a result, we will continue to follow our standard of monitoring each instance on a case by case to see how the situation evolves, and if a time comes that we see Threads federations as a risk to the safety of our users and community, we will defederate at that time.

Indirectly, we know that admins of other instances have expressed that they will defederate with any instances that will continue to federate with Threads. While we hope that the information in this blog post has helped people understand the currently limited risk of continuing to federate with Threads, we also know that other instances have a much more limited set of resources and may need to preemptively defederate with the Threads instance. The beauty of the Fediverse is that each instance has that right and ability.

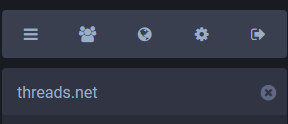

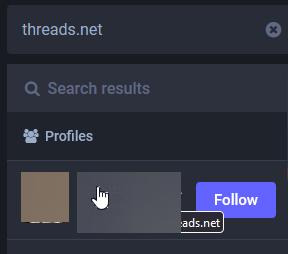

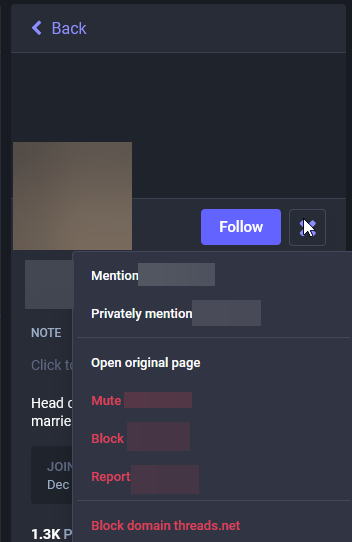

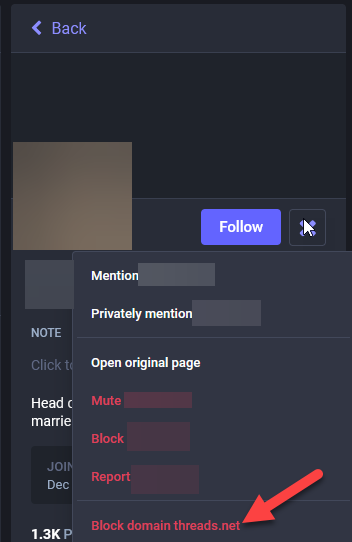

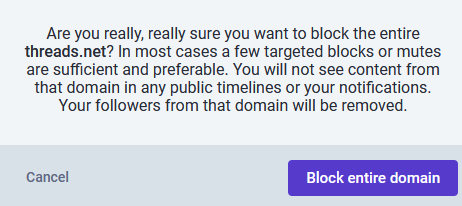

How to block Threads.

- Search for “threads.net” in the search box

- Select a user from the results

- Open the menu from the profile

- Select “Block domain threads.net

- Read the prompt and select your desired action

To understand the ramifications of blocking an instance, please review the Mastodon documentation for details on what happens.

Next Steps

As Threads continues to implement their integration with ActivityPub and the Fediverse at large, we will watch how those users integrate with our community and how their service interacts with our servers. If you would like to learn more about our criteria for how Hachyderm handles federating with other instances, please review our A Minute from the Moderators - July Edition where we list out our criteria.

Crypto Spam Attacks on Fediverse

The Situation

Starting around 8 May 2023, we began to receive reports that Mastodon Social was being inundated with crypto spam.

Initially, it appeared that only Mastodon Social, and then Mastodon World, were impacted. In each case we Limited the instance and made a site-wide announcement. As the issue progressed, it became clear that more instances were being targeted for this same style of crypto spam. As a result, we have decided to change our communication strategy to utilize this blog post as a source for what’s happening and who is being impacted, rather than relying on increasingly frequent site-wide announcements.

As it stands: right now we have seen waves of spam from Mastodon Social, Mastodon World, and now TechHub Social. These waves usually mean that we receive over 100-200 reports in less than a few hours. (By contrast, we usually receive ~20 reports per week.)

What this means for Hachydermians (and Mastodon users in general)

Spam attacks seem to make use of open federation to either find accounts to misuse follow/unfollow behaviors, DMs, comments, and other invasive behaviors. In general, Limiting a server is sufficient for mitigating the impacts of these behaviors. Limiting means that Hachydermian’s posts no longer show up in the Federated feeds of impacted instances, which means that bots can no longer use the Federated feed as a vector for malicious behavior. While this is a good thing and means that these bots will no longer be able to spam Hachydermians, the Limit works both ways. This means:

- The posts for Limited instances will no longer show up on the Federated feed

- You will receive approval requests for all accounts on Limited instances

- User profiles will appear to have been “Hidden by instance moderators”

The UI messages for the latter two are a little difficult at times to determine what it means. Essentially, you will see the same message for a user to follow you from an instance that’s been Limited, and for you to view their profile page, as you would if we had only Limited that specific user.

For users on the impacted instances, these messages should not be taken as the individual user has engaged in any sort of malicious activity. In general, when we see individual-level malicious activity, we suspend federation (block) the individual user rather than Limit them. Instead, these messages are only a consequence of us needing to Limit the servers while they are doing their best to manage the spam attacks they are undergoing.

The impacted instances

We are maintaining the list of instances that we are Limiting as a result of the current crypto spam attack here. Note that this is not all instances we currently have Limited for any reason, only the ones that are experiencing this specific scenario. We will continue to announce when new instances are added to this list via our Hachyderm Hachyderm account and link back to this blog post. Instances that are no longer impacted will be un-Limited and removed from the list below. (When the list is empty, that means that all instances have been un-Limited.)

Updates

Update 25 May 2023 - we’ve been crypto spam free from Mastodon Social and Mastodon World, so we’ve gone ahead an un-Limited those instances.

Update 2 Jun 2023 - we’ve been crypto spam free from TechHub Social, so we’ve gone ahead and un-Limited that instance! That’s the last one, so this incident is resolved.

Updating Domain Blocks

Today we are unblocking x0f.org from our list of suspended instances to federate with.

Hachyderm will begin federating with x0f.org immediately.

Reason for suspending

We believe the original suspension was related to early moderation actions taken earlier in 2022. The moderation actions took place before Hachyderm had a process/policy in place to communicate and provide reasoning for the suspension.

Reason for removing suspension

According to our records, we have no reports on file that constitute a suspension of this domain. The domain was brought to our attention as likely flagged by mistake. After review we have determined that there is no reason to suspend this domain.

A Note On Suspensions

It is important to us to protect Hachyderm’s community and our users. We may not always get this right, and we will often make mistakes. Thank you to our dedicated users for surfacing this (and the other 13 domains) we have removed from our suspension list. Thank you to the broader fediverse for being patient with us as we continue to iterate on our processes in this unprecedented space.

Opening Hachyderm Registrations

Yesterday I made the decision to temporarily close user registrations for the main site: hachyderm.io.

Today I am making the decision to re-open user registrations again for Hachyderm.

Reason for Closing

The primary reason for closing user registrations yesterday was related to the DDoS Security Threat that occurred the morning after our Leaving the Basement migration.

The primary vector that was leveraging Hachyderm infrastructure for perceived malicious use, was creating spam/bot accounts on our system. Out of extreme precaution, we closed signups for roughly 24 hours,

Reason for Opening

Today, Hachyderm does not have a targeted growth or capacity number in mind.

However, what we have observed is that user adoption as dropped substantially compared to November. In my opinion, I believe that we will see substantially less adoption in December than we did in November.

We will be watching closely to validate this hypothesis, and will leverage this announcement page as an official source of truth if our posture changes.

For now we have addressed some more detail on growth, registrations, and sustainability in our Growth and Sustainability blog.

Posts

A Minute from the Moderators

Welcome back to Moderator Minutes. This is Part 2 of our series on Community Care in Online Spaces.

In Part 1, we explored how norms shift, how those shifts create friction, and how the ways we respond to that friction can either build or erode community. We asked you to sit with a question: is it working? And we talked about the difference between reacting and responding, between correcting and connecting.

This month, we’re going one layer deeper. Rather than looking at what we do when friction arises, we want to look at what drives us there in the first place. What is it about seeing something distressing that makes us want to act immediately, and what happens when that impulse meets the realities of navigating online spaces?

A note before we begin: the posts in this series are denser than our usual Moderator Minutes. We’ll be drawing on work from several writers and activists who have spent years thinking about these patterns, and we’ll include a reading list at the end for those who want to go further. We don’t expect anyone to read all of it. We do hope the ideas here give you something useful to sit with.

The Impulse to Act

When we see something awful happening, most of us are moved to act. The worse it is, the stronger the pull. This is not a flaw. It is, in many ways, one of the better things about us. The desire to do something in the face of harm is the engine behind every mutual aid network, every crisis response, every act of solidarity that has ever mattered.

But there is a difference between urgency and intention. Urgency says: something must be done, now. Intention asks: what am I trying to achieve, and will this action get me there? When we act from urgency alone, without pausing to examine our goals, we may not know whether our actions meet them. Worse, we may not recognize when our actions actively prevent them.

This is not a new observation. In 2013, writer and organizer Ngọc Loan Trần published an essay called “Calling IN: A Less Disposable Way of Holding Each Other Accountable.” Trần, a queer, disabled, Việt/mixed-race activist, wrote it after attending a large racial justice conference, a room full of people who shared values, who understood the stakes, who all “got it.” And what Trần observed was that those same people, in that shared space, had lost compassion for each other.

That observation is worth sitting with. It was not a room full of people who didn’t care. It was a room full of people who cared enormously, acting from urgency rather than intention, and in doing so, causing harm to the very community they were trying to protect.

Trần coined the term “calling in” to describe an alternative: accountability rooted in relationship rather than punishment. The idea took hold, and over the following decade, a lineage of writers and practitioners built on it. We will be drawing on several of them throughout this post.

The reason we start here is that this pattern, good people, strong values, urgency without intention, is not limited to conferences or activist circles. It is the pattern we see in our reports. It is the pattern many of us enact in our own timelines. And it is the pattern that, left unexamined, erodes the communities we are trying to sustain.

The Pressure to Do More

The urgency we just described does not exist in a vacuum. It is fed by something deeper: the persistent feeling that whatever we are doing, it is not enough.

Consider an example. Say you have spent your week writing posts to help people: sharing mutual aid links, amplifying crisis response information, sending messages of care to people in vulnerable or targeted communities. You have been doing real, tangible work. Then you log in and see a wave of news, or an online harassment campaign, or another round of something terrible, and the question surfaces: am I doing enough?

Let’s mirror something from Part 1 and sit with our feelings for a moment.

Do you feel like you are doing enough, with what is happening in the world, right now?

Whatever your answer is, stay with it a little longer. Feel your way through why you do, or don’t, feel that you are doing enough.

This is not a rhetorical exercise. How you answer that question shapes how you behave online. When the answer is “no” (and for many of us, the answer is almost always “no”) it creates pressure. That pressure comes from two directions. It comes from inside: the internal monologue that tells us we should be doing more, doing it better, doing it differently. And it comes from outside: from communities, from trusted voices, from the implicit and sometimes explicit message that if you are not visibly fighting, you are complicit.

Both of these pressures can be valid in their origin. But when they compound, they produce a specific and damaging result: we stop being able to see the work we are already doing. The posts we wrote, the care we extended, the quiet labor of showing up consistently, all of it disappears under the weight of what we haven’t done. And once we can no longer see our own contributions, we become desperate to do something that feels visible, immediate, and unmistakable.

In 2016, writer and activist Maisha Z. Johnson named a version of this pattern in her essay “6 Signs Your Call-Out Isn’t Actually About Accountability,” published on Everyday Feminism and later republished by YES! Magazine. Johnson, whose background includes work with Community United Against Violence, the nation’s oldest LGBTQ anti-violence organization, described what happens when holding each other accountable drifts into punishing each other. She drew a distinction between acting out of love for our communities and acting out of fear and pain. The behaviors can look similar from the outside, both involve publicly naming a problem, but they come from different places and produce very different outcomes.

What Johnson was naming is something many of us will recognize if we are honest with ourselves. There are times when we call something out because we have a clear goal: we want a specific behavior to change, and we believe our words will contribute to that change. And there are times when we call something out because we are exhausted, because we are afraid, because the pressure to do something has become unbearable and this is the something that is available to us. The second is not accountability. It is release. And while the release is understandable, it is not the same as the work it replaces.

Where We Feel Power

There is a concept in psychology called “locus of control.” It describes where we believe power over our lives sits, inside ourselves, in our own actions and choices, or outside ourselves, in forces beyond our control. The term comes from the work of psychologist Julian Rotter in the 1950s, and it has been widely studied since.

We want to be careful with this concept, because the traditional framing carries a bias. The conventional view treats an internal locus of control, the belief that your actions shape your outcomes, as the healthier orientation. But this framing was developed within a Western, individualist perspective, and it has a significant blind spot. For people from marginalized and targeted communities, the feeling that external forces control your outcomes is often not a distortion. It is an accurate reading of the situation. Discrimination, structural oppression, and systemic inequity are real forces that genuinely constrain what individual action can achieve. Scholars studying locus of control in the context of marginalized populations have noted that what looks like a “less healthy” external orientation may in fact reflect a realistic assessment of the limitations that racism, discrimination, and socioeconomic conditions impose.

So we are not using this concept to suggest that feeling powerless is itself a problem to fix. For many of us, the feeling of lacking control is grounded in reality, and naming that is important.

What we do want to examine is what happens next. What we do with that feeling.

Here is an analogy. Imagine you volunteer at a soup kitchen. In relatively stable times, the work feels meaningful. You can see the people you are helping. The scale of the need, while real, feels approachable. Your contribution feels like it matters.

Now imagine the same soup kitchen during an extreme crisis, a climate disaster, an economic collapse, a wave of displacement. The lines are longer. The need is greater. The news is relentless. You are doing the same work, or more, but it no longer feels like enough. The scale of the crisis dwarfs your individual contribution, and the gap between what is needed and what you can provide becomes a weight you carry home with you.

The work did not become less valuable. But your relationship to it changed.

This is what happens in online spaces when the world feels like it is on fire. Even if you are showing up consistently, posting resources, supporting others, extending care, the relentless news cycle and the visible enormity of harm can make all of it feel like nothing. And when your sustained, quiet work feels like nothing, the pull toward something louder becomes very strong.

This can lead to an unhealthy relationship with the negative feelings that accumulate. You may not be able to stop an oppressive force. But you can shout about it. You can name and shame. You can find a target and direct your frustration somewhere, anywhere, because the alternative, sitting with the feeling that you cannot individually fix what is broken, is unbearable.

The shift is subtle, and it is worth naming precisely: the goal stops being “change this specific thing” and becomes “make this feeling go away.” That is the moment where the impulse to act stops serving the community and starts serving our own need for relief. And as we discussed in the previous section, that is not accountability. It is release wearing its clothes.

Misdirected Action

Once that shift has happened, once the goal has quietly become relief rather than change, the question of where we direct our energy starts to matter in a different way.

Not all calling out is the same. There are differences in context, in scale, and critically, in the power held by the person on the receiving end. When we are acting from intention, we tend to account for those differences naturally. We think about who we are talking to, what we want to achieve, and whether this specific action in this specific context will move us toward that goal. When we are acting from urgency and exhaustion, those distinctions collapse. Everything feels equally urgent. Everyone feels equally responsible. And the energy has to go somewhere.

Consider this example. A large company does something harmful. The CEO makes a decision that affects thousands of people. You are angry, and that anger is justified. But the CEO is not on your timeline. The person who is on your timeline is the company’s social media manager, posting the corporate line because that is their job. They did not make the decision. They likely have no authority in the hierarchy that produced it. They may privately disagree with it. They need to buy food and pay rent, and this is the job they have.

There is a reason this feels like a distinction without a difference in the moment. When a person speaks, we naturally assume they are speaking with their own voice, that their words represent their own thoughts, their own positions, their own authority. That is how individual speech works. But corporate speech breaks that assumption. The social media manager is voicing something that was shaped by people they may never have met, reflecting decisions they had no part in making. They are a conduit, not a source. And yet, because we hear a person speaking, we instinctively assign them the ownership that comes with speech. The CEO, the social media manager, the customer support agent, all of them register as “the company” in a way that erases the vast differences in their actual authority. This is natural, but it is not accurate. And when we act on it without examining it, we direct real force at people who have no capacity to give us what we want.

If you spend your afternoon arguing with that social media manager, or ten social media managers across ten companies, you have not changed the company’s behavior. You have not reached the decision-maker. You have not decreased the harm. You have worn yourself out, and a person who was not responsible for the harm has absorbed the force of your frustration.

There is also an alternative that is easy to miss. You can call out the company without engaging with its social media account at all. You can post about what the company did, name it clearly, tag a handle or use a hashtag if you want visibility, and then not respond when the company account replies. The social media manager or their team may be tasked with responding to mentions. That does not obligate you to continue the conversation. You have said what you needed to say. You do not owe anyone a thread. Posting about a company and arguing with its lowest-ranking representatives are very different actions, and only one of them preserves your energy for the work that actually matters.

This is not a moral judgment. It is a practical observation. When we stop distinguishing between targets based on their actual power and responsibility, we spread our energy across surfaces that cannot absorb it productively. It feels like fighting. It is not the same as building.

Kai Cheng Thom, a Chinese-Canadian trans woman, writer, social worker, and conflict resolution practitioner, wrote directly about this dynamic in her book I Hope We Choose Love: A Trans Girl’s Notes from the End of the World. Thom observed that in social justice communities, “accountability” had increasingly become a script, a performance of the correct response rather than a genuine path toward repair. The question she posed was pointed: are we more committed to the feeling of calling out than to the work of resolving conflict?

Thom was writing from inside queer and trans communities, about dynamics she had experienced firsthand, and her observation carries a challenge that applies well beyond those communities. If accountability has become a performance, if we are following the script because the script makes us feel like we are doing something, then we need to ask what the performance is replacing. And whether we would be willing to do the harder, quieter, less visible thing instead.

That question is not comfortable. It is also, we believe, necessary. Because if the patterns we have described in this post are recognizable to you, the urgency, the pressure, the powerlessness, the misdirected energy, then you are already familiar with how exhausting they are. You already know that they are not sustainable. And you may already suspect that there is something better available, even if you are not sure what it looks like.

That is what we want to talk about next.

Channeling Energy Constructively

Everything we have described so far, the urgency, the pressure, the collapse of distinctions, the drift from accountability into release, shares a common feature. It is all individual. It is one person, overwhelmed, trying to address systemic harm through individual action, burning through their own reserves in the process.

This is not a coincidence. The patterns we have been describing are, in large part, the result of trying to do collective work alone.

Leah Lakshmi Piepzna-Samarasinha, a disabled queer writer and longtime disability justice activist, offers a different frame. In their book Care Work: Dreaming Disability Justice, Piepzna-Samarasinha describes a concept they call “care webs,” networks of mutual support that are not built in response to a crisis, but maintained as ongoing infrastructure. A care web is not one person showing up heroically in a moment of need. It is a group of people who have already done the work of figuring out who can do what, who needs what, and how they will sustain each other over time.

The distinction matters for what we are talking about here. When you are operating alone, one person, one timeline, one set of reserves, every new crisis draws from the same finite well. The pressure to do more is a pressure on you, personally, and when you cannot meet it, the failure feels personal too. The urgency becomes yours to carry. The powerlessness becomes yours to manage. And the misdirected action we described earlier becomes almost inevitable, because individual urgency demands individual action, and individual action at that scale does not work.

A care web changes the unit of action. Instead of asking “what can I do about this?” you are asking “what can we sustain together?” Instead of measuring your contribution against the scale of the crisis, a measurement that will always come up short, you are measuring it against what your web has agreed it can hold. The soup kitchen does not become less overwhelming because you joined a care web. But your relationship to the overwhelm changes, because you are no longer the only person responsible for responding to it.

This is not abstract. In online spaces, care webs can take forms that many of you will recognize even if you have not used the term. A group of people who coordinate to amplify mutual aid posts so that no single person has to carry the full weight of visibility. A set of friends who check in with each other before responding to a provocative thread, not to police each other but to ask: are you okay? Is this the thing you want to spend your energy on right now? A community that has explicitly discussed what it is building together, so that when the next crisis arrives, its members have a shared framework for deciding how to respond rather than each person reacting alone.

What these examples share is that they move the question from “am I doing enough?” to “are we building something that can sustain us?” That shift does not eliminate the urgency. It does not make the world less frightening. But it changes the relationship between the individual and the work. It makes the quiet, sustained labor, the posts, the check-ins, the mutual aid, the showing up, visible again as contributions to something larger, rather than invisible drops in an endless ocean.

This is what we mean by channeling energy constructively. Not the absence of anger. Not the suppression of urgency. But the practice of directing that energy into structures that can hold it, that can receive it and convert it into something that outlasts the moment. A post about a company’s harmful behavior, written with clarity and shared once, is channeled energy. A thread that spirals into hours of argument with people who have no power to change anything is not. A check-in with someone in your community who you know is struggling is channeled energy. Doomscrolling until you find a target for the feelings you cannot sit with is not.

The difference is not always obvious in the moment. That is why the infrastructure matters more than any individual decision. If you have already built the web, if you have people who will check in with you, if you have a shared understanding of what you are building together, then the moment of crisis is not the moment where you have to figure all of this out alone. You have already done that work. And the work you did counts.

Sitting With It

We started this post by asking you to go one layer deeper, to look not just at how you respond to friction, but at what drives you there. We have covered a lot of ground: the gap between urgency and intention, the pressure that makes our own work invisible to us, the ways powerlessness channels itself into misdirected action, and the difference between acting alone and building something that can sustain us.

We want to close by returning to the question we asked earlier in this post, with a small shift:

Where is your energy going, and is it creating what you want for yourself and your community?

If you have been sitting with that question as you read, you may have noticed something. The answer may not have changed. But the way you are looking at it might have.

If your energy is going toward things that leave you exhausted without moving you closer to what you want, for yourself or for the people around you, that is not a signal to try harder. It may be a signal that you are carrying something alone that was never meant to be carried alone. It may be a signal that your reserves are depleted and that what you need is not more action, but more support. It may be a signal that the structures around you, the care web, the shared understanding, the people who check in, are not yet built, or need tending.

None of that is a failing. All of it is an invitation.

In our next Moderator Minutes, we will be looking at what happens when these ideas meet friction in real time: how to set and hold boundaries in online spaces, and how to de-escalate when things are already moving fast. If this post was about understanding where your energy goes and why, the next will be about protecting it when it matters most.

Our community discussions have moved to Zulip since the last Moderator Minutes. We are still inviting people in by hand, so if you would like to join us, ping us in our Discord and we will get you added.

As always, processes grow over time, and this conversation is no exception.

Further Reading

References

Ngọc Loan Trần, “Calling IN: A Less Disposable Way of Holding Each Other Accountable” (2013). Published on Black Girl Dangerous. Also archived on TransformHarm.org.

Maisha Z. Johnson, “6 Signs Your Call-Out Isn’t Actually About Accountability” (2016). Published on Everyday Feminism. Also republished by YES! Magazine.

Kai Cheng Thom, I Hope We Choose Love: A Trans Girl’s Notes from the End of the World (2019). Arsenal Pulp Press. Here is a review on Plenitude Magazine.

Leah Lakshmi Piepzna-Samarasinha, Care Work: Dreaming Disability Justice (2018). Arsenal Pulp Press. Here is a review on Autostraddle.

Leah Lakshmi Piepzna-Samarasinha and Ejeris Dixon, eds., Beyond Survival: Strategies and Stories from the Transformative Justice Movement (2020). AK Press. Here is a review on Autostraddle.

If you’d like to read more

On calling in and calling out: Loretta Ross has spent the decade since Trần’s essay turning “calling in” into something teachable, drawing on five decades of organizing. Her TED talk is about fifteen minutes and a good place to start. Her book Calling In: How to Start Making Change with Those You’d Rather Cancel (Simon & Schuster, 2025) develops a five-part continuum of responses (canceling, calling out, calling off, calling on, and calling in) with practical guidance on when each one fits.

On online harassment and digital safety: Each of these is most useful when you read it before you need it. They cover overlapping ground in different registers, so it is worth knowing which is closest to your situation.

- PEN America’s Online Harassment Field Manual: written for writers, journalists, artists, and activists, particularly those who are women, BIPOC, or LGBTQIA+. What makes it distinct is that it is organized by your role in the situation, whether you are being targeted, witnessing someone else being targeted, or running an organization where staff is, rather than as one undifferentiated guide. (This resource appears to have geo restrictions.)

- Games and Online Harassment Hotline Digital Safety Guide: originally written by Jaclyn Friedman, Anita Sarkeesian, and Renee Bracey Sherman, all of whom had been targeted themselves. It is the most granular of the three on specific tactics like doxxing prevention and hate raids, and it is especially direct about how well-meaning allies can inadvertently amplify harassment by engaging on a target’s behalf. The hotline itself closed in October 2023, but the guide is still online.

- EFF’s Surveillance Self-Defense: the deepest of the three on technical infrastructure (encryption, secure messaging, device security, network circumvention). The “Security Scenarios” section lets you pick a situation that matches yours (activist, journalist, abortion access worker, LGBTQ youth) and follow a tailored learning path rather than starting from scratch.

On activism, organizing, and community safety more broadly: The Commons Social Change Library is the broadest of the resources in this section. Run by movement librarians in Australia, it curates over 1,500 free, openly accessible materials from movements around the world, organized into collections on campaign strategy, community organizing, working in groups, justice and diversity, and creative activism. Where the resources above are sharply focused, the Commons is where you go when your question is harder to name in advance.

A Minute from the Moderators

Welcome to February!

For both this month’s and next’s Moderator Minutes, we’d like to spend some time discussing Community Care in Online Spaces: what it looks like, why it matters, and how the ways we engage with each other can either cultivate or corrode it.

What Is Community Care?

Community care, in its most tangible form, tends to manifest as in-person support services. Body doubling for errands, welfare check-ins, shared meals. The kind of reciprocal sustenance that keeps people and neighborhoods intact. While we are not in person here, the underlying ethos translates directly to digital spaces. The way we center our intentions is the same; it is the modality that differs.

Community care in an online context is the practice of centering collective wellbeing in how we engage with each other. Not merely what we say, but also: how we interpret, how we respond, and how we protect. It encompasses the choices we make when we encounter something that confuses us, frustrates us, or frightens us. It is, ultimately, the difference between reacting and responding.

Why Now?

We have noticed an increase in certain patterns appearing in our reports and as well as the broader Fediverse. They tend to cluster into a few categories: people having difficulty expressing or maintaining their boundaries, people having difficulty understanding and respecting the boundaries of others, people struggling to de-escalate conflict, changes and reinterpretations of historical norms and phrases, and an increase in reported posts (on and off Hachyderm) that start to touch on our “No Violence” rule.

We are not going to address all of these in a single post. This month, we are focusing on norm shifts: how they happen, why they matter, and how they can be both a force for care and a vector for harm. In our next Moderator Minutes, we will address some of what drives all of us toward confrontation and what it means to channel that energy safely. Boundaries and de-escalation will follow after that.

These patterns are not unrelated phenomena, and some of them have been building for years. They share a common root: the compounding stress of navigating spaces where the rules of engagement are shifting under everyone’s feet, incumbents and newcomers alike. When our reserves are depleted, our interpretive generosity contracts. Our fuses shorten. Our impulse to correct, rather than connect, intensifies. All of this is understandable, and much of it is even admirable in its origin. But the downstream effects are creating friction and, in some cases, genuine harm within our community.

We want to walk through this pattern. Not to admonish, but to illuminate. And as you read, we’d like to ask you to hold two questions:

- When you encounter a norm violation, how do you tend to respond?

-and- - Has it been working?

Shifting Norms and Maladaptive Responses

When Communities Collide

Norms collide whenever a community changes substantially in size or composition. For the Fediverse, 2022 was a major inflection point. People leaving The Twitter That Was arrived in droves, and with them came an entirely different set of internalized expectations about how to engage on social media.

The existing Fediverse had, over years, developed norms around content warnings, alt text, hashtag usage, thread etiquette, and more. These norms were often unwritten, learned through participation in comparatively small communities where people knew each other. When the population grew by orders of magnitude, those norms didn’t scale with it. There was no infrastructure to onboard newcomers into them. The new arrivals were not being taught; they were being expected to already know.

The response from much of the incumbent population was, understandably, frustration. People who had built something felt it was being overrun. But frustration without a constructive channel tends to curdle. It can stop us from seeing other choicees, and may leave us feeling like there were no choices at all. Justified burnout and ire lead to what some have come to call a “Fedi HOA” culture: hair-splitting interpretations of norms that felt, to those on the receiving end, like their intentions and learning path were being borderline weaponized.

For example, many of us have seen situations where a conversation unexpectedly escalated in visibility, and rather than reaching out to the relevant moderation teams to de-escalate, entire instances were blocked. Sometimes the mods (or admins) themselves were involved in the conflict itself. These scaling problems were felt as acts of aggression. These by type may be a more dramatic example, but the everyday version is equally as pervasive: people being yelled at for not using content warnings rather than being asked to use them. The newcomers were not told what was wanted from them. They were punished for not already knowing. Incumbents were exhausted, trying to bring newcomers into the fold. And on and on the wheel turned.

Here is the uncomfortable question: did all of this work?

If what we tried had been effective, we would now have 100% consistent usage of content warnings, alt text, and hashtag consistency across the Fediverse. We do not. The behavior that felt right, maybe even righteous, of correcting people loudly and publicly, did not produce the outcome it was meant to produce. It produced a population of newer users who were socialized to expect abuse rather than receive guidance. And when newcomers learned to ignore the torrent, they also missed the message.

The Patterns That Persisted

Some of the maladaptive engagement patterns that emerged from this period have calcified into features of how people interact on the Fediverse today. We want to name two, because they illustrate how legitimate needs can generate responses that don’t actually serve those needs.

The first is inconsistent expectations around what degree of diligence someone should read up on all other parties in a thread. People carry a considerable amount of energy into interactions with the presumption that others should have reviewed their biography, their pinned posts, their history before engaging with them at all. And when someone interacts without that context, those who feel unseen and unheard often treat others as if they have been personally affronted.

The underlying need is real. People want to be understood. They want their context to be respected. But expecting every person who encounters your posts to research your biography before interacting is not scalable. It never was, and it certainly is not now. Being angry at an individual for failing to do something that people do not consistently do directs frustration at individual people for a systemic impossibility.

The second is the rise of tools like Do Not Reply cards. These exist to solve a genuine problem: someone posts a question to the public internet, say, “people who have experience with $TOPIC, I’d like to ask…” and everyone answers, including people without the relevant experience. The responses are unhelpful, the poster is overwhelmed, and the signal is drowned in noise.

The maladaptive solution: cards that tell people not to engage with your public posts. Essentially: as a community, we have started posting on the public internet to tell people not to interact with us.

We say this not to mock the impulse. The frustration is legitimate, and the boundary is reasonable. (We even talk about it in our Docs.) But the mechanism is not working as well as people need it to. It is an expression of frustration dressed as a solution. It does not build the connective infrastructure that would actually address the underlying need. And it normalizes a posture of preemptive hostility toward engagement itself, the very thing that online community is built from.

If you use these tools, or if you carry the expectation that others should research you before engaging: we are not asking you to stop having those needs. We are asking you to sit with the question we posed earlier. Is it working?

The Smallest Scale

The same pattern plays out interpersonally. We were recently privy to a situation on the Fediverse involving attribution norms where the phrase “stealing to share” was used. The parties involved interpreted the situation differently, the interaction escalated, and multiple moderation teams became involved. We are not going to recount the specifics or adjudicate who was right, because the specifics are not the point.

What we want to talk about is the pattern. These types of interactions, where a norm in transition meets people with different contexts and expectations, tend to follow a predictable trajectory. Someone perceives a violation. They respond with accusation or correction. The other person, feeling attacked, becomes defensive. The defensive response is read as doubling down. Escalation follows. By the time it’s over, neither party has gotten what they actually needed, and the original issue (in this case, attribution) has been buried under layers of conflict.

This trajectory is not inevitable, but it is common enough to be worth examining. Accusation, even when justified, activates self-protection. Self-protection is not understanding. And understanding is what is actually needed for someone to change their behavior. If the goal is for people to share with attribution, then the response that serves that goal is the one that communicates it clearly: “please credit me when you share.” The response that is least likely to achieve that goal is the one that triggers a defensive reaction and ensures the person never hears the underlying request at all.

This does not mean that the person who feels wronged is at fault for the escalation. Sometimes you communicate clearly, you make a reasonable request, and the other person still doesn’t hear you. When that happens, that is on them. You can choose other tools that available to you at that point that are not esclatory, such as: disengagement and contacting moderation (reporting the behavior, contacting the relevant moderation team). We will talk more about this in our next Moderator Minutes, but we want to name it here: choosing community care does not guarantee care in return. It is still, in our view, the better choice. Not only because it is more likely to produce the outcome you want, but because the practice of choosing connection over punishment is itself part of building the community we believe that we all want to live in.

Two Reactions

Back to the topic at hand, across all of these scales (the ecosystem-wide migration, the persistent patterns, the individual interaction) there are two common response patterns:

Reaction A: correct and punish. Yelling about content warnings. Using content moderation as a tool for retaliation, via blocks and so forth. Treating colloquialisms as transgressions. Responding to perceived norm violations with accusation rather than communication. These responses share a common thread: they express what someone doesn’t want without communicating what they do want. They create conflict in the space where connection could have been.

Reaction B: connect and guide. Reaching out to moderation teams to help onboard newcomers and de-escalate conflict. Telling someone directly what you need from an interaction rather than punishing them for not intuiting correctly. Responding to “I’m going to steal this to share!” with “please credit me when you share with your friends!” Sending requests to moderation teams to please talk to their member about respecting boundaries. These responses share a different common thread: they lead with intention. They identify the actual gap and offer a path across it.

Reaction B is community care. Reaction A, however understandable its origins, is not.

We are not suggesting that Reaction B is easy, or that it should be the default in every circumstance. There are bad-faith actors for whom connection is not the answer, and we will address that directly in our next Moderator Minutes. But for the vast majority of these collisions we see, the person on the other side of the interaction is not acting in bad faith. They are navigating norms that are shifting under their feet, just as you are being exhausted by the same.

When Norms Become Weapons

The dual nature of shifting norms carries a harmful corollary that we need to name explicitly. One of the ways that queer and Black people experience harassment online is not always through direct hostility (slurs, threats, overt bigotry). It is through the selective and disproportionate enforcement of rules and standards.

What this can look like: if a rule exists, queer and Black community members must abide by it more scrupulously, and will be held accountable more harshly for perceived transgressions. The examples above are relatively benign, misinterpretations and scaling failures. But the same mechanism scales toward something that is used as a vector for harm. When norms shift, they do not shift uniformly for everyone. The same behavior that is overlooked or forgiven in one person becomes grounds for a report when performed by someone from a marginalized community. The rule itself may be neutral in its text. Its application is not.

We want to be clear: this is a form harassment. It may not look like a slur, but holding queer and Black people to a different, and often impossible, standard is a form of harm that is both pervasive and difficult to name, precisely because it cloaks itself in the language of fairness and rule-following.

We raise this here because it is inextricable from the conversation about norms. As our community develops and refines its standards for engagement, we must remain vigilant about who those standards are being applied to, and whether the application is equitable. Rules are tools. Like all tools, they can be used to build or to bludgeon.

Choosing How We Respond

We have spent much of this post describing what can go wrong. We want to close with what can go right.

The question we asked you to carry, is it working?, is not meant to shame anyone. It is meant to open space for a different kind of honesty. Many of us have invested significant emotional energy in behaviors that feel right but are not producing the outcomes we want. That is not a moral failing. It is an invitation to try something else.

Reaction B is not just a better response to any single situation. It is a practice. It is the habit of leading with what you want. The habit of assuming the person in front of you is capable of growth rather than deserving of punishment. The habit of asking yourself, before you hit “post,” whether your words are building the community you want to inhabit.

This is not naivete, and it is not asking you to extend infinite grace to bad-faith actors. That is a different conversation, and one we will address next month. It is asking you to extend considered grace to the people around you who are, like all of us, navigating norms that are shifting under their feet.

What if we chose now to stop engaging in behaviors that are not, or no longer, serving us and instead invested in trying new ones that might?

A Question for You

We’d like to close with something for the community to sit with:

When you encounter a norm in transition (something that used to be fine but is now contested, or something that used to be unacceptable but is gaining new context) how do you decide how to respond? What helps you lead with curiosity rather than correction?

We’d love to hear your reflections. Last Moderator Minutes we started a discussion via a Github Issue, this time we’ll actually point you to our Discord‡. In our next Moderator Minutes, we will be discussing what happens when the impulse to act on something distressing meets the realities of online safety, and how community care applies when the stakes are higher than the examples we explored here.

As always, processes grow over time, and this conversation is no exception.

‡ Discord: we’re actually in the process of migrating out of Discord to Zulip! We’re not doing the final leg of the proces until the moderation team feels appropriately comfortable and confident with the new tools, though it’s probable that future discussions will be in Zulip so stay tuned.

A Minute from the Moderators

How we can support our communities

Hello and welcome to the new year! I hope everyone was able to find sources of rest and recouperation towards the end of the year. As we kick things off, I’d like to talk about community support.

As technologists, we care about the open source ecosystem. As people invested in decentralized spaces where others can connect and grow, we care about the sustainability of the platforms that bring people together.

Something I’ve been seeing across all of these fronts is the rise of burnout.

Burnout creates its own forcing function. As ecosystems shrink, fewer people remain to carry things forward. If the work doesn’t also change to accommodate that, then each remaining person’s task load grows. This means that burn out can spiral, and spiral faster.

I see more people discussing concerns that their third spaces are shrinking or disappearing. In the physical world, some of this was driven by the pandemic. Smaller gathering places like cafes and community centers struggled when people couldn’t meet in person, and not all of them recovered. Surveys find that roughly half of adults now report experiencing loneliness, with young people seeing an especially sharp drop in time spent with friends.

In online spaces, we see something similar, but perhaps with a different cause. As people feel greater economic uncertainty at work or greater demands at home, we’re all less likely to give of ourselves to community spaces—not because we don’t value them, but because we simply don’t have it in us.

But: what if we did (have it in us)?

I want to pause here for a few moments of reflection.

First, I want you to remember moments when you felt the most joy. These don’t need to be work-related, or even open source / volunteer org related. Anything in your lifetime, no matter how long or fleeting. Think of past conversations, places, or other moments. Whatever comes to mind, just take your time.

Now, I want you to look for what your moments of joy have in common. Was it connection? A sense of accomplishment? Being seen, or seeing others clearly? Feeling part of something, or feeling free of something? Being able to relax and let your guard down? Make a mental note of any common internal experience you have amongst them.

Now, a second set of thoughts. This time about projects, communities, or other volunteer work. While for many of us here on Hachyderm there may be open source / tech communities in here, I want to emphasize that you do not need to constrain on these unless you wish to. This question is intentionally as broad as you need it to be.

One last reflection exercise: how have things been lately, in your honest assessment?

Have you wanted to do something you couldn’t? More time with loved ones, time to read, to feel the sun or touch grass? Do any of you feel like you might have “time”, in terms of minutes on a clock, but not “the time”, as in no emotional space inside of you?

If any of this resonates, you’re not alone. You’re not failing. A lot of people are sharing similar thoughts and feelings. Feeling like they take actions that don’t have results. Working hard with no, or insuffient, outcome. Participating in community but not feeling connected.

Why this exercise?

The reason I wanted everyone to go through this exercise is to help us all remember that we’re not alone. And that while we on Hachyderm, and many online spaces, welcome volunteers that it helps us help you if you are able to tell us your wants and needs.

When you want to volunteer somewhere, or just generally participate in a community in some way, The Place usually knows What They Need from you. In the case of a social media instance, these are usually tasks that support people supporting each other (handling reports, disagreements, etc.) or support technology that supports people (software updates, server maintenance, security, etc.).

Collaborating together means being able to discussing the intersection of “skills you bring” with “meeting community needs”, but also being able to communicate what brings you joy, and when you need rest, especially in times like what we’re all currently experiencing.

To the point: stepping up to volunteer your time is a gift to others. And being able to know when you need to step back and/or ask for change is a gift to yourself.

Both of these are legitimate. Neither is failure.

Stepping up

Volunteer labor is measured in seconds and spoons.

There are a lot of calls for action out there, and it’s both possible and likely that there are more situations you wish you could share your skills and time with than you’re actually able to. This is okay. It’s a good opportunity to learn more about yourself and to practice having boundaries. After all, a sustainable you is better than a you that’s spread too thin and burning out.

When you’re assessing whether to join a new effort, ask questions of yourself first. Not just “how much time can I devote per week,” but “what do I need to know” so you can better determine how much you can give and whether the organization’s needs match your skills.

Not all time is created equal. As someone who used to take on-call rotations, I used to joke that one minute of incident time is one year of real life. The stress is higher, the pace is higher, everything takes more. There’s a reason organizations with healthy incident response culture ensure people can take time to themselves after a shift with an incident—even if they were scheduled to take that shift.

As you better understand how much time and emotional energy is needed from you, you can not only better decide your capacity but also better communicate the boundaries you need.

Stepping back

Stepping back doesn’t have to mean dramatic exit. It can mean doing less, doing differently, taking a defined break, or shifting into a different role. The key distinction is to step back with intention instead of collapsing from exhaustion. Making choices with intention not only helps you, but also helps give information to the community (or organization) that you’re working with.

If you find yourself overcommiting because you’re worried as you watch your communities struggle: that is absolutely a hard choice. But it helps both you and others to say “not right now” or “not this”. The spaces you’ve helped build do not require your depletion as the price of membership or community. (And honestly, if they did would you want to participate in that environment?)

In the broader open source ecosystem, there’s even data around this. Tidelift surveys show a consistent 60% of open source maintainers either have quit or consider quitting. This means it’s not individual weakness, it’s something we can choose to normalize doing with intention, communication, and without shame, so that communities can adapt rather than being caught off guard.

Asking for change